Approaches to Increasing Treatment Integrity

Detrich, R., States, J. & Keyworth, R. (2020). Approaches to Increasing Treatment Integrity. Oakland, CA: The Wing Institute. https://www.winginstitute.org/treatment-integrity-strategies


There are two general approaches to increasing treatment integrity. Educators can arrange either (a) antecedent conditions so that interventions are more likely to be implemented well or (b) consequences to encourage high-quality implementation. In reality, both approaches are likely necessary. Staff training is the most common antecedent approach, and performance feedback the most common consequent strategy. These and other methods are discussed below.

Antecedent Approaches

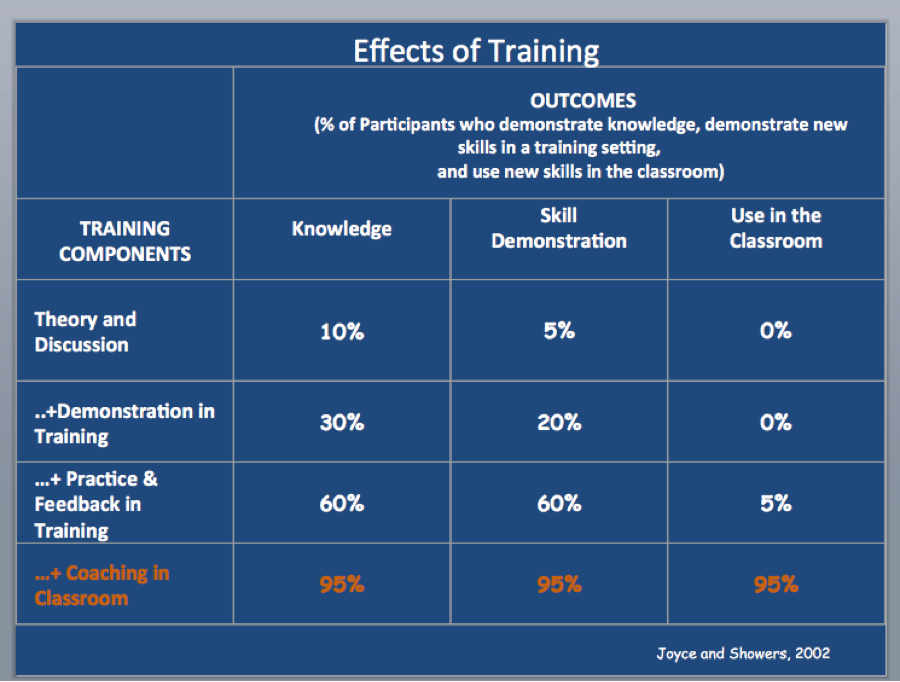

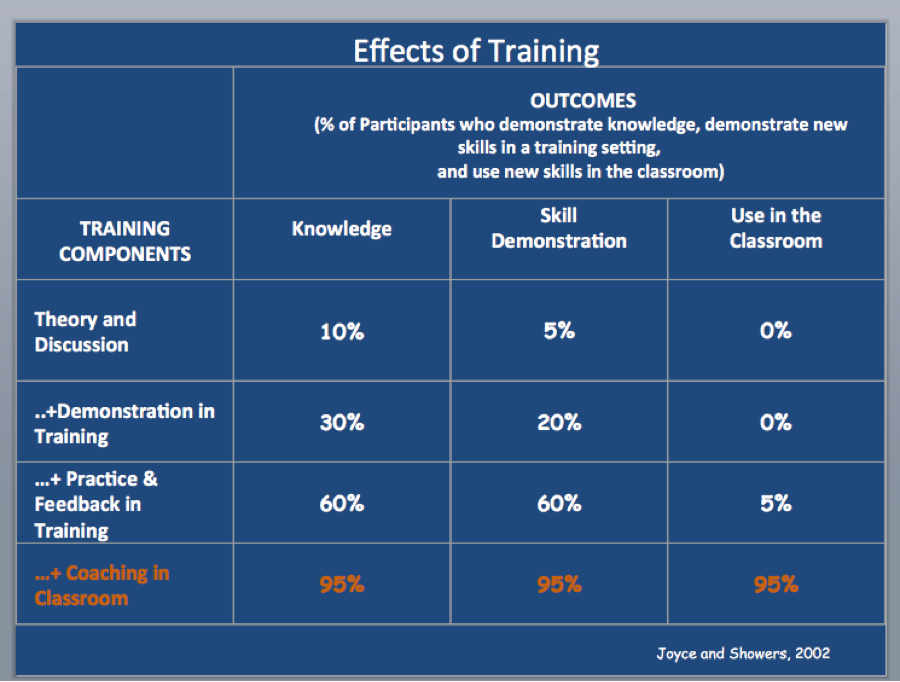

Staff training. Although staff training is easily the most common approach to ensuring that those responsible for implementation know what is expected of them, it is a very broad category, and not all approaches are equally effective. Joyce and Showers (2002) completed a systematic review of professional development in education; the data are summarized in Figure 1 below.

Figure 1. The effectiveness of various approaches to staff training

If you accept that any effort to improve the quality of implementation is ultimately measured by the degree to which the skills taught in training actually occur in the classroom, then, as Figure 1 illustrates, the most effective means of ensuring implementation is an approach that involves coaching and feedback in the classroom. The usual approaches to staff training, which include lecture, demonstration, and behavioral rehearsal without in-class coaching, result in very little generalization to the classroom.

Training in the details of an intervention is necessary, but it is not sufficient to ensure high levels of treatment integrity. Much of the treatment integrity literature suggests that soon after training ends and implementation begins, treatment integrity begins to decline, even if implementers have demonstrated they can implement the intervention with 100% accuracy during training (Mortenson & Witt, 1998; Noell, Duhon, Gatti, & Connell, 2002; Noell et al., 2005).

Video modeling. Video modeling is a teaching approach in which a competent person is recorded performing a skill; the learner watches the video and then imitates the model. Video modeling has been used to increase the quality of implementation of intervention plans in a small number of studies (Collins, Higbee, & Salzberg, 2009; DiGennaro-Reed, Codding, Catania, & Maguire, 2010; Hawkins & Heflin, 2011). All of these studies focused on the adherence dimension of treatment integrity. Hawkins and Heflin used video modeling and visual performance feedback to increase teachers’ use of behavior-specific praise. The results of this study are somewhat difficult to interpret: because video modeling and visual performance feedback were combined in a single package, the effects of the two elements cannot be separated. Although the combination is problematic from a research perspective, from a practice perspective the important finding is that the package increased treatment integrity. DiGennaro-Reed and colleagues (2010) examined the effects of video modeling on the implementation of behavioral interventions and found that it produced improved but variable performance. When performance feedback was added to video modeling, treatment integrity was high and stable. These data suggest that performance feedback may be necessary to maximize the benefits of video modeling. In contrast, in their investigation of video modeling as a means to improve the implementation of a problem-solving intervention, Collins and colleagues (2009) reported that video modeling alone resulted in performance that was stable across time and generalized to novel situations. The reasons for the difference in outcomes across these studies need to be examined. From a practice perspective, however, video modeling has the potential to be an effective and efficient approach to increasing treatment integrity.

Among the efficiencies of video modeling is that once a standard video model of implementation is developed, it can be used with many teachers to increase implementation, with performance feedback added as needed. Not all teachers may require feedback to maintain stable performance. Another advantage of video modeling is that individual teachers can view the video whenever they need to, without coordinating their schedules with other teachers and staff. Adding performance feedback increases the cost, but video modeling combined with feedback may still cost less than a system that relies on performance feedback alone. If not all teachers require feedback, then the costs will be substantially lower than those of a feedback-only system.

Increasing motivation. Often, implementers are not motivated to implement an intervention because they find it unacceptable in some way. An extensive literature on treatment acceptability suggests that variables such as the effort and time required to implement an intervention, as well as its compatibility with the implementer’s views about appropriate intervention, can influence acceptability (Elliott, 1988; Miltenberger, 1990; Reimers, Wacker, & Koeppl, 1987). Any of these variables can influence the implementer’s willingness to implement the intervention as planned.

Contextual fit (a measure of how well an intervention integrates into the existing structures and routines of a classroom as well as the resources required to implement) has been described as a critical feature if interventions are to be implemented with integrity (Albin, Lucyshyn, Horner, & Flannery, 1996; Detrich, 1999). In a review of variables influencing the adoption of interventions, Riley-Tillman and Chafouleas (2003) argued that interventions requiring small adaptations are more likely to be adopted and sustained than interventions requiring significant changes to existing systems.

In a related study, Benazzi, Horner, and Good (2006) assessed the technical adequacy and contextual fit of intervention plans developed by three groups: teams with no behavior specialist, behavior specialists working alone, and teams that included a behavior specialist. Technical adequacy was rated high when a behavior specialist, either alone or as a team member, developed the plan. Contextual fit was rated high when a team developed the plan, whether or not it included a behavior specialist. Plans developed by teams, with or without a behavior specialist, were preferable to plans developed by a behavior specialist alone. Although this study did not directly measure treatment integrity, it suggests that plans developed by people who understand the culture of the school and the classroom are more acceptable, and therefore more likely to be implemented, than plans that do not attend to these contextual variables. These data suggest that increasing contextual fit increases the motivation to implement, but this relationship needs to be evaluated directly and empirically.

Implementation planning. One antecedent approach to increasing contextual fit is implementation planning (Sanetti, Collier-Meek, Long, Kim, & Kratochwill, 2014), which involves identifying barriers and planning the logistics of how an intervention will be implemented. The teacher responsible for implementation is closely involved in the planning process, and that teacher and the intervention specialist (such as a behavior analyst) decide together how to adapt the intervention to better fit the local context. In a study evaluating the implementation planning process, Sanetti and colleagues (2014) reported that both adherence and quality increased following implementation planning. In that study, implementation planning was introduced after training, once low levels of implementation had been observed. In practice, it is appropriate to use implementation planning when an intervention is first being developed, so that logistical issues and barriers are addressed and implementation is high from the start.

Choice. Another technique for increasing motivation to implement with integrity is to let the implementer choose the components of the intervention. Andersen and Daly (2013) allowed teachers to choose intervention components from a list of evidence-based strategies and to develop and implement their own interventions; the teachers also implemented an intervention developed by an expert. The researchers then compared treatment integrity for the teacher-developed intervention with that for the expert-developed intervention. Treatment integrity and student outcomes were better when teachers implemented the intervention they had developed.

A body of literature suggests that choice has reinforcing effects (Vaughn & Horner, 1997). It may well be that giving implementers a menu of options will be sufficiently reinforcing to increase the level of treatment integrity. At the very least, implementers are likely to choose elements of interventions that are the best contextual fit for their setting. In a study by Kern and colleagues (Kern, Childs, Dunlap, Clarke, & Falk, 1994), teachers were allowed to select intervention strategies that had been shown through preference assessments to influence student behavior. The teachers selected interventions that best fit their classroom routines and structures, and these teacher-selected interventions resulted in improved student behavior. There were no direct measures of treatment integrity in this study, so how well the teachers implemented the intervention is unknown, although it can be argued they implemented it well enough to have a positive impact on student behavior.

These data, along with the Andersen and Daly (2013) study, lead to the conclusion that those responsible for implementing an intervention should be involved in developing it. This likely has two benefits: it increases the face validity of the intervention, and it probably makes the intervention a better contextual fit. Elliott (1988) suggested that interventions are rated more acceptable if they are perceived to be effective. Among the variables that influence the perception of effectiveness is the degree to which an intervention is consistent with the implementer’s views about appropriate treatment.

Consequence Strategies

Performance feedback. The most common strategy for increasing treatment integrity is performance feedback (Auld, Belfiore, & Scheeler, 2010; Barton, Pribble, & Chen, 2013; Burns, Peters, & Noell, 2008; Casey & McWilliam, 2011; Noell & Gansle, 2014). Feedback has been used to increase the use of single elements of an intervention plan such as differential reinforcement (Auld, Belfiore, & Scheeler, 2010); to improve the overall implementation of academic interventions (Mortenson & Witt, 1998); to improve the implementation of behavior support plans (Codding, Feinberg, Dunn, & Pace, 2005); and to improve the implementation of decision protocols by school-based problem-solving teams (Bartels & Mortenson, 2005; Burns, Peters, & Noell, 2008; Duhon, Mesmer, Gregerson, & Witt, 2009).

There are several effective means of delivering feedback: face-to-face (Mortenson & Witt, 1998), by email (Barton, Pribble, & Chen, 2013), and in graphed form (Zoder-Martell et al., 2013). Some data suggest that verbal feedback paired with graphed feedback results in better implementation than verbal feedback alone (Sanetti, Luiselli, & Handler, 2007; Zoder-Martell et al., 2013).

Although performance feedback is unquestionably an effective means of increasing the quality of implementation, it poses some potential problems in service settings. Feedback usually requires direct observation of those responsible for implementation, so implementation in a public school requires deciding which personnel should observe and give feedback. Another challenge is how often feedback must be given; a number of studies suggest that feedback once per week is sufficient to maintain high levels of treatment integrity (Mortenson & Witt, 1998; Noell et al., 2005). In most of the published studies on performance feedback, external consultants provided the feedback. One study examined the effects of performance feedback provided by school personnel (Sanetti, Fallon, & Collier-Meek, 2013). If school personnel are to provide feedback to teachers, they must deliver it consistently, as the feedback protocol specifies. These data point to the need for a system that makes certain those responsible for monitoring treatment integrity are following protocols. Failure to do so results in poor implementation of the performance monitoring system, which in turn results in inadequate implementation of the intervention by teachers. (A systems approach is discussed in more detail under A Multitiered System of Support for Teachers, below.)

Coaching in the classroom. In many ways, coaching in the classroom is a continuation of training with coaching; the difference is that training with coaching takes place in an instructional setting removed from the classroom, whereas coaching in the classroom takes place where the intervention is implemented. Coaching in the classroom also involves performance feedback. Knight (2007) provided a clear description of what is required to make coaching effective. Joyce and Showers (2002) showed that training without coaching has very little impact in the classroom, suggesting that coaching in the classroom is an essential component of training. Reinke, Stormont, Herman, and Newcomer (2014) demonstrated the necessity of performance feedback as part of the coaching process: teachers who received more performance feedback had higher levels of implementation than teachers who received less. Similarly, teachers who received more coaching in the classroom after a low baseline level of implementation achieved higher levels of implementation than teachers who received less coaching.

Just as with performance feedback, coaching in the classroom has limitations. It is resource intensive, and most schools do not have a sufficient pool of personnel able to coach, which may limit a school district’s ability to scale up coaching in the classroom. Knight (2007) described coaching as a partnership between the coach and the teacher. An effective coach requires two broad skill sets. First, the coach must have the technical expertise to solve the problems that arise during implementation in a classroom. Second, the coach must have the social influence skills needed to form a partnership with the teacher and to be perceived by the teacher as credible (Knight, 2007; Rogers, 2010). The benefits of coaching are diminished when the coach is not perceived as technically competent and credible.

Self-monitoring. Having teachers monitor their own implementation has considerable appeal because, if effective, it is a relatively inexpensive approach to increasing treatment integrity. By comparison, both performance feedback and coaching are relatively costly but have a well-established record of effectiveness, at least under research conditions. Self-monitoring is among the less well-researched strategies that hold promise. To date, the research literature has not provided empirical support for this strategy, although some data suggest ways of improving its effectiveness.

Sanetti, Chafouleas, O’Keeffe, and Kilgus (2013) evaluated teacher self-report of implementation of a daily report card intervention as a means of increasing treatment integrity. They compared the effects of teachers verbally reporting their implementation with those of providing a written self-report, with reports made either daily or weekly. Teachers who reported daily had higher levels of treatment integrity than those who reported weekly, but the differences were not statistically significant. Similarly, written reports of implementation resulted in slightly higher levels of treatment integrity than verbal reports, but, again, the differences were not statistically significant. In spite of these results, self-evaluation approaches such as those examined in this study continue to hold promise. The data suggest that frequent, written self-evaluations may ultimately prove useful, but more research is needed before educators add self-evaluation strategies to their empirically supported efforts to increase treatment integrity.

A Multitiered System of Support for Teachers

Among the challenges of ensuring that interventions are implemented with integrity are these two factors: (a) Often, many individuals are responsible for implementation, and (b) limited resources are available to assess how well interventions are being implemented. One promising systems approach to ensuring higher levels of treatment integrity is a multitiered system of support for teachers (Myers, Simonsen, & Sugai, 2011; Sanetti & Collier-Meek, 2015). In this approach, all teachers are exposed to some effort to ensure high-quality implementation (tier 1), most often staff training. No additional action is required for teachers who respond to this level of support and implement with integrity. For those teachers who do not implement with adequate integrity, a tier 2 intervention, such as performance feedback or implementation planning, is put in place. Finally, some teachers will require an even more intensive intervention (tier 3), such as coaching, in order to implement with sufficient integrity. The appeal of this approach is that teachers receive only the level of support they need to be effective. The presumption is that as the interventions to support teachers increase in intensity across tiers of support, fewer teachers will require services.

In the study by Sanetti and Collier-Meek (2015), two of the six teachers required only tier 1 support. An additional two teachers were able to implement with integrity following tier 2 support. Finally, two teachers required the most intensive level of intervention (tier 3) to implement with integrity. Such an approach allows resources to be allocated based on identified need. This type of data-based, systematic approach to ensuring treatment integrity allows schools and school districts to scale up monitoring and to influence treatment integrity by using resources in an efficient and effective manner.
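The tiered logic described above can be sketched in a few lines of Python. This is a hypothetical illustration, not a procedure taken from Sanetti and Collier-Meek (2015) or Myers, Simonsen, and Sugai (2011): the 80% integrity criterion, the convention of recording one integrity score after each tier of support, and all teacher data are assumptions made for the example.

```python
from typing import Dict, List, Optional

# Hypothetical criterion for "adequate" integrity (proportion of intervention
# steps implemented as planned); the studies cited above do not fix this value.
INTEGRITY_CRITERION = 0.80

# Example supports for each tier, taken from the text.
TIER_SUPPORTS = {
    1: "staff training",
    2: "performance feedback or implementation planning",
    3: "coaching in the classroom",
}


def tier_needed(scores: List[float], criterion: float = INTEGRITY_CRITERION) -> Optional[int]:
    """Return the first tier after which a teacher met the criterion.

    scores[0] is integrity measured after tier 1 support, scores[1] after
    tier 2, and so on. Returns None if no tier has yet produced adequate
    integrity.
    """
    for tier, score in enumerate(scores[: len(TIER_SUPPORTS)], start=1):
        if score >= criterion:
            return tier  # no further escalation needed
    return None


def count_by_tier(teachers: Dict[str, List[float]]) -> Dict[int, int]:
    """Count teachers by the tier that sufficed (0 = criterion not yet met)."""
    counts = {0: 0, **{tier: 0 for tier in TIER_SUPPORTS}}
    for scores in teachers.values():
        tier = tier_needed(scores)
        counts[tier if tier is not None else 0] += 1
    return counts


# Hypothetical data: a teacher moves to the next tier only after falling
# short of the criterion at the current one.
example_teachers = {
    "Teacher A": [0.92],
    "Teacher B": [0.60, 0.85],
    "Teacher C": [0.55, 0.70, 0.90],
    "Teacher D": [0.50, 0.65, 0.75],
}

if __name__ == "__main__":
    for name, scores in example_teachers.items():
        tier = tier_needed(scores)
        support = TIER_SUPPORTS[tier] if tier is not None else "criterion not yet met"
        print(f"{name}: tier {tier} ({support})")
    print(count_by_tier(example_teachers))
```

Because a teacher is escalated only after falling short at the current tier, the more intensive and costly supports are reserved for the teachers who need them, which is the resource argument made in the text.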

An implicit assumption of the multitiered system of support is that teachers must implement the intervention with integrity, and those supporting teachers through staff training and other efforts must implement the support plan with integrity. There is some evidence that support plans are not always implemented with integrity (Sanetti, Fallon, & Collier-Meek, 2013). If this is the case, then someone in the system will need to monitor the integrity of support plan implementation, and some other part of the system will, in turn, have to be responsible for making sure that the plan for supporting those who support teachers is implemented with integrity. Ultimately, treatment integrity is a systems problem; it can be solved only when each level of the system takes some responsibility for making certain that interventions are implemented well in the classroom.

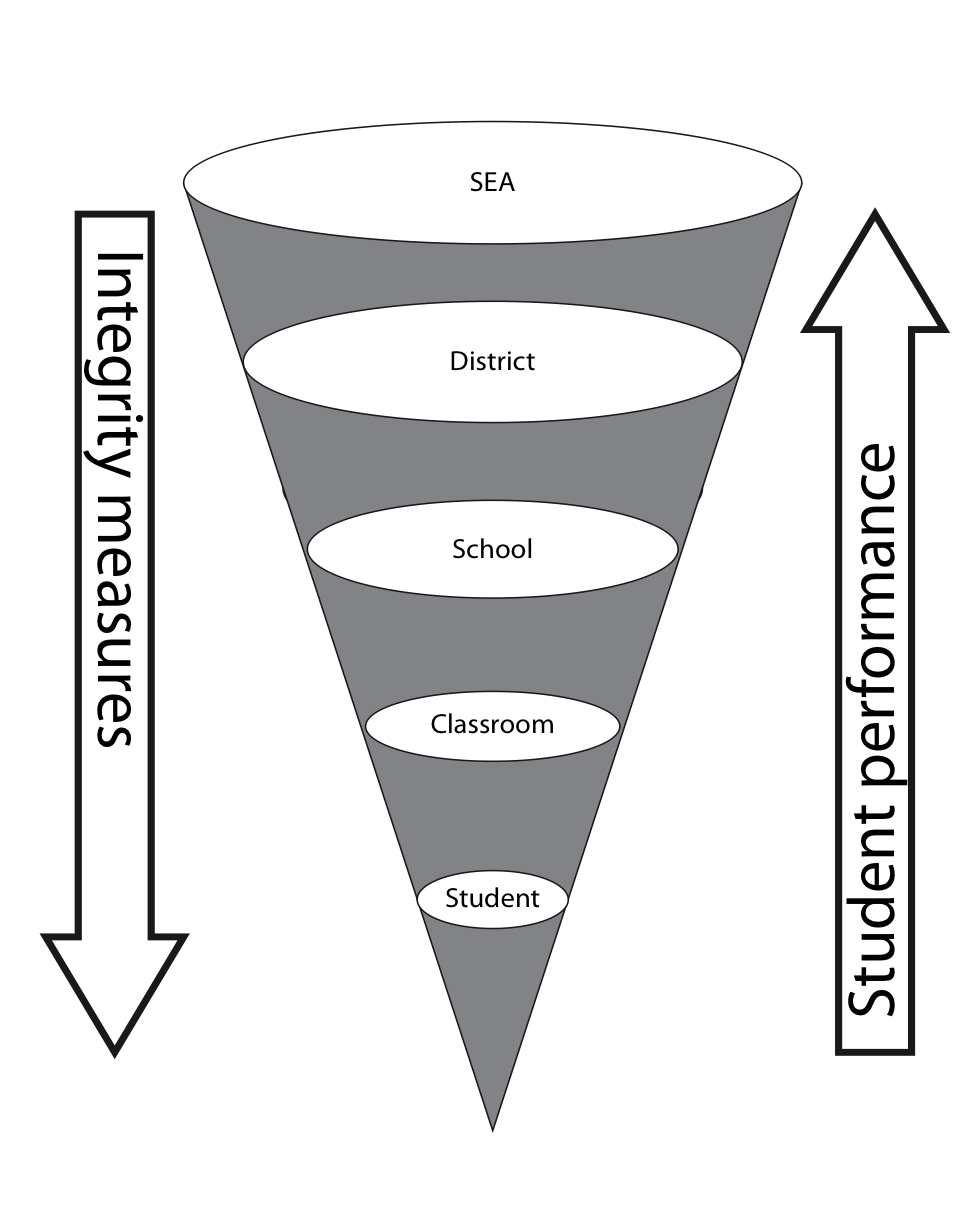

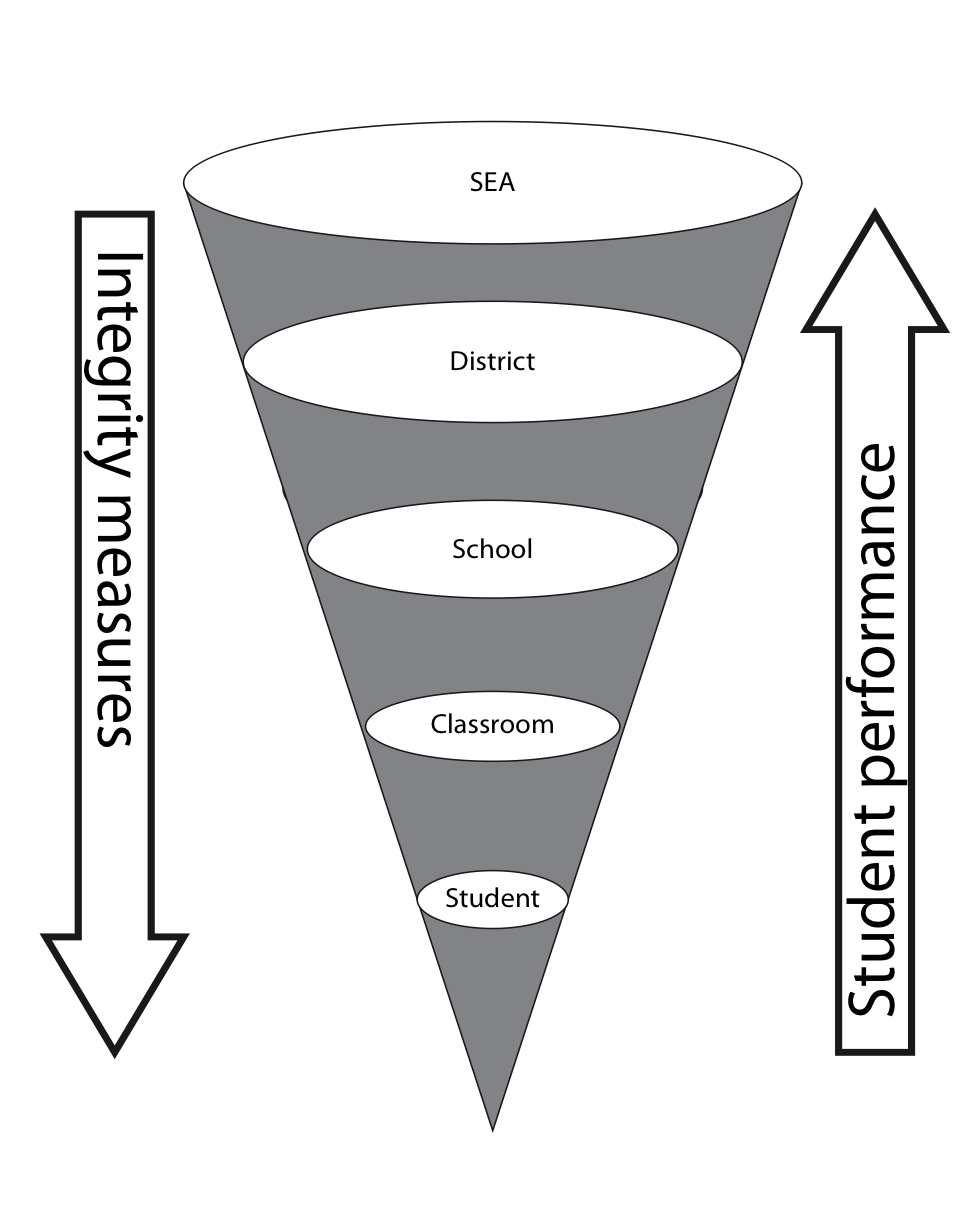

Detrich (2013) has proposed a data-based systems approach that involves all levels of the system in maintaining high-quality implementation. As shown in Figure 5, two types of data are required in a systems approach to treatment integrity.

Figure 5. A data-based systems approach to treatment integrity

Student performance data roll up into larger and larger units of aggregated data. The classroom teacher is concerned about the performance of individual students in his or her class and of the class as a whole. At the school level, the concern is with the performance of each class and of the school. These data can be meaningfully interpreted only if something is known about how well the classroom teacher implemented the interventions and how well those responsible for supporting the teacher implemented the support plan. The same logic applies across all levels of the system.

Whereas student performance data roll up across levels of the system, data about the quality of implementation flow down across those levels. The state education agency provides data to the school district. The district uses these data as feedback to schools about how well they are implementing the teacher support plan. The school, in turn, provides data to classroom teachers about how well they are implementing interventions in their classes.

Both student performance data and treatment integrity data are necessary. Student performance data without treatment integrity data do not allow us to draw conclusions from student performance, because we do not know how well the intervention was implemented. Treatment integrity data without student performance data do not allow us to make decisions about the effectiveness of the intervention.
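The point that the two kinds of data must be read together can be illustrated with a small Python sketch that rolls student scores up from classes to a school and pairs each class result with its treatment integrity data before interpreting it. This is only an illustration, not Detrich's (2013) model: the class names, scores, integrity values, and the 80% criterion are all hypothetical.

```python
from statistics import mean
from typing import Dict, List, Optional, Tuple

# Hypothetical student performance data for one school: class -> student scores.
student_scores: Dict[str, List[float]] = {
    "Class 1": [72, 80, 91],
    "Class 2": [65, 70, 69],
    "Class 3": [80, 84, 82],
}

# Hypothetical treatment integrity per class (proportion of intervention steps
# implemented as planned). None means integrity was not measured.
class_integrity: Dict[str, Optional[float]] = {
    "Class 1": 0.90,
    "Class 2": 0.55,
    "Class 3": None,
}


def roll_up(scores_by_class: Dict[str, List[float]]) -> Tuple[Dict[str, float], float]:
    """Aggregate student scores to class means and a school mean.

    The school mean is taken over all students rather than over class means,
    so larger classes carry proportionally more weight.
    """
    class_means = {name: mean(scores) for name, scores in scores_by_class.items()}
    school_mean = mean(s for scores in scores_by_class.values() for s in scores)
    return class_means, school_mean


def interpret(class_means: Dict[str, float],
              integrity: Dict[str, Optional[float]],
              criterion: float = 0.80) -> Dict[str, str]:
    """Pair each class result with its integrity data before drawing conclusions."""
    notes = {}
    for name in class_means:
        level = integrity.get(name)
        if level is None:
            notes[name] = "cannot interpret: no treatment integrity data"
        elif level < criterion:
            notes[name] = "low integrity: result may not reflect the intervention as designed"
        else:
            notes[name] = "interpretable: intervention implemented with adequate integrity"
    return notes


if __name__ == "__main__":
    class_means, school_mean = roll_up(student_scores)
    print("School mean:", school_mean)
    for name, note in interpret(class_means, class_integrity).items():
        print(f"{name}: mean {class_means[name]}, {note}")
```

Only Class 1 yields a result that speaks to the intervention itself; the other two class means are identical in form but cannot be interpreted the same way, which is the argument for collecting both kinds of data at every level.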

This systems approach highlights the fact that what happens in an individual classroom is the responsibility of every level of the system. If any level of the system fails to support high-quality implementation, then it is likely that student performance will be negatively impacted and the system will fail in its primary responsibility to students.

References

Albin, R. W., Lucyshyn, J. M., Horner, R. H., & Flannery, K. B. (1996). Contextual fit for behavioral support plans: A model for “goodness of fit.” In L. K. Koegel, R. L. Koegel, & G. Dunlap (Eds.), Positive behavioral support: Including people with difficult behavior in the community (pp. 81–98). Baltimore, MD: Paul H. Brookes.

Andersen, M., & Daly, E. J. (2013). An experimental examination of the impact of choice of treatment components on treatment integrity. Journal of Educational and Psychological Consultation, 23(4), 231–263.

Auld, R. G., Belfiore, P. J., & Scheeler, M. C. (2010). Increasing pre-service teachers’ use of differential reinforcement: Effects of performance feedback on consequences for student behavior. Journal of Behavioral Education, 19(2), 169–183.

Bartels, S. M., & Mortenson, B. P. (2005). Enhancing adherence to a problem-solving model for middle-school pre-referral teams: A performance feedback and checklist approach. Journal of Applied School Psychology, 22(1), 109–123.

Barton, E. E., Pribble, L., & Chen, C.-I. (2013). The use of e-mail to deliver performance-based feedback to early childhood practitioners. Journal of Early Intervention, 35(3), 270–297.

Benazzi, L., Horner, R. H., & Good, R. H. (2006). Effects of behavior support team composition on the technical adequacy and contextual fit of behavior support plans. Journal of Special Education, 40(3), 160–170.

Burns, M. K., Peters, R., & Noell, G. H. (2008). Using performance feedback to enhance implementation fidelity of the problem-solving team process. Journal of School Psychology, 46(5), 537–550.

Casey, A. M., & McWilliam, R. A. (2011). The characteristics and effectiveness of feedback interventions applied in early childhood settings. Topics in Early Childhood Special Education, 31(2), 68–77.

Codding, R. S., Feinberg, A. B., Dunn, E. K., & Pace, G. M. (2005). Effects of immediate performance feedback on implementation of behavior support plans. Journal of Applied Behavior Analysis, 38(2), 205–219.

Collins, S., Higbee, T. S., & Salzberg, C. L. (2009). The effects of video modeling on staff implementation of a problem-solving intervention with adults with developmental disabilities. Journal of Applied Behavior Analysis, 42(4), 849–854.

Detrich, R. (1999). Increasing treatment fidelity by matching interventions to contextual variables within the educational setting. School Psychology Review, 28(4), 608–620.

Detrich, R. (2013). Innovation, implementation science, and data-based decision making: Components of successful reform. In M. Murphy, S. Redding, & J. Twyman (Eds.), Handbook on innovations in learning (pp. 33–49). Charlotte, NC: Information Age Publishing.

DiGennaro-Reed, F. D., Codding, R., Catania, C. N., & Maguire, H. (2010). Effects of video modeling on treatment integrity of behavioral interventions. Journal of Applied Behavior Analysis, 43(2), 291–295.

Duhon, G. J., Mesmer, E. M., Gregerson, L., & Witt, J. C. (2009). Effects of public feedback during RTI team meetings on teacher implementation integrity and student academic performance. Journal of School Psychology, 47(1), 19–37.

Elliott, S. N. (1988). Acceptability of behavioral treatments: A review of variables that influence treatment selection. Professional Psychology: Research and Practice, 19(1), 68–80.

Hawkins, S. M., & Heflin, L. J. (2011). Increasing secondary teachers’ behavior-specific praise using a video self-modeling and visual performance feedback intervention. Journal of Positive Behavior Interventions, 13(2), 97–108.

Joyce, B. R., & Showers, B. (2002). Student achievement through staff development. Alexandria, VA: Association for Supervision and Curriculum Development (ASCD) Books.

Kern, L., Childs, K. E., Dunlap, G., Clarke, S., & Falk, G. D. (1994). Using assessment-based curricular intervention to improve the classroom behavior of a student. Journal of Applied Behavior Analysis, 27(1), 7–19.

Knight, J. (2007). Instructional coaching: A partnership approach to improving instruction. Thousand Oaks, CA: Corwin Press.

Miltenberger, R. G. (1990). Assessment of treatment acceptability: A review of the literature. Topics in Early Childhood Special Education, 10(3), 24–38.

Mortenson, B. P., & Witt, J. C. (1998). The use of weekly performance feedback to increase teacher implementation of a prereferral academic intervention. School Psychology Review, 27(4), 613–627.

Myers, D. M., Simonsen, B., & Sugai, G. (2011). Increasing teachers’ use of praise with a response-to-intervention approach. Education and Treatment of Children, 34(1), 35–59.

Noell, G. H., Duhon, G. J., Gatti, S. L., & Connell, J. E. (2002). Consultation, follow-up, and implementation of behavior management interventions in general education. School Psychology Review, 31(2), 217–234.

Noell, G. H., & Gansle, K. A. (2014). The use of performance feedback to improve intervention implementation in schools. In L. M. Sanetti & T. R. Kratochwill (Eds.), Treatment integrity: A foundation for evidence-based practice in applied psychology (pp. 161–184). Washington, DC: American Psychological Association.

Noell, G. H., Witt, J. C., Slider, N. J., Connell, J. E., Gatti, S. L., Williams, K. L., . . . Duhon, G. J. (2005). Treatment implementation following behavioral consultation in schools: A comparison of three follow-up strategies. School Psychology Review, 34(1), 87–106.

Reimers, T. M., Wacker, D. P., & Koeppl, G. (1987). Acceptability of behavioral interventions: A review of the literature. School Psychology Review, 16(2), 212–227.

Reinke, W., Stormont, M., Herman, K., & Newcomer, L. (2014). Using coaching to support teacher implementation of classroom-based interventions. Journal of Behavioral Education, 23(1), 150–167.

Riley-Tillman, T. C., & Chafouleas, S. M. (2003). Using interventions that exist in the natural environment to increase treatment integrity and social influence in consultation. Journal of Educational and Psychological Consultation, 14(2), 139–156.

Rogers, E. M. (2010). Diffusion of innovations (5th ed.). New York, NY: Simon & Schuster.

Sanetti, L. M. H., Chafouleas, S. M., O’Keeffe, B. V., & Kilgus, S. P. (2013). Treatment integrity assessment of a daily report card intervention: A preliminary evaluation of two methods and frequencies. Canadian Journal of School Psychology, 28(3), 261–276.

Sanetti, L. M. H., & Collier-Meek, M. A. (2015). Data-driven delivery of implementation supports in a multi-tiered framework: A pilot study. Psychology in the Schools, 52(8), 815–828.

Sanetti, L. M. H., Collier-Meek, M. A., Long, A. C. J., Kim, J., & Kratochwill, T. R. (2014). Using implementation planning to increase teachers’ adherence and quality to behavior support plans. Psychology in the Schools, 51(8), 879–895.

Sanetti, L. M. H., Fallon, L. M., & Collier-Meek, M. A. (2013). Increasing teacher treatment integrity through performance feedback provided by school personnel. Psychology in the Schools, 50(2), 134–150.

Sanetti, L. M. H., Luiselli, J. K., & Handler, M. W. (2007). Effects of verbal and graphic performance feedback on behavior support plan implementation in a public elementary school. Behavior Modification, 31(4), 454–465.

Vaughn, B. J., & Horner, R. H. (1997). Identifying instructional tasks that occasion problem behaviors and assessing the effects of student versus teacher choice among these tasks. Journal of Applied Behavior Analysis, 30(2), 299–312.

Zoder-Martell, K., Dufrene, B., Sterling, H., Tingstrom, D., Blaze, J., Duncan, N., & Harpole, L. (2013). Effects of verbal and graphed feedback on treatment integrity. Journal of Applied School Psychology, 29(4), 328–349.

View this Overview as PDF

TITLE

SYNOPSIS

CITATION

LINK

Treatment Integrity in the Problem-Solving Process Overview

Treatment integrity is a core component of data-based decision making (Detrich, 2013). The usual approach is to consider student data when making decisions about an intervention; however, if there are no data about how well the intervention was implemented, then meaningful judgments cannot be made about effectiveness.

Destination: Equity

This special issue of Strategies is devoted to highlighting this knowledge base and corresponding practices. In this issue you will find an in-depth case study of a school district engaged in systemic improvement, using the principles and practices of what Dr. Jackson calls the Pedagogy of Confidence®.

Introduction: Proceedings from the Wing Institute’s Sixth Annual Summit on Evidence-Based Education: Performance Feedback: Using Data to Improve Educator Performance.

This book is compiled from the proceedings of the sixth summit entitled “Performance Feedback: Using Data to Improve Educator Performance.” The 2011 summit topic was selected to help answer the following question: What basic practice has the potential for the greatest impact on changing the behavior of students, teachers, and school administrative personnel?

States, J., Keyworth, R. & Detrich, R. (2013). Introduction: Proceedings from the Wing Institute’s Sixth Annual Summit on Evidence-Based Education: Performance Feedback: Using Data to Improve Educator Performance. In Education at the Crossroads: The State of Teacher Preparation (Vol. 3, pp. ix-xii). Oakland, CA: The Wing Institute.

Coaching side by side: One-on-one collaboration creates caring, connected teachers

This article describes a school district administrator's research on optimal coaching experiences for classroom teachers. This research was done with the intent of gaining a better understanding of how coaching affects student learning.

Akhavan, N. (2015). Coaching side by side: One-on-one collaboration creates caring, connected

teachers. Journal of Staff Development, 36,34-37.

Best Practices in Collaborative Problem Solving for Intervention Design.

This chapter describes a process, collaborative problem solving, that can guide decision making and intervention planning for improving academic and behavior outcomes for students. The primary focus is on the two basic components of the term collaborative problem solving.

Allen, S. J., & Graden, J. L. (2002). Best Practices in Collaborative Problem Solving for Intervention Design.

Principal Talent Management According to the Evidence: A Review of the Literature

This literature review aims to provide district leaders with an understanding of the research and best evidence regarding the components of effective principal talent management systems.

Applying Positive Behavior Support and Functional Behavioral Assessments in Schools

The purposes of this article are to describe (a) the context in which positive behavior support (PBS) and functional behavioral assessment (FBA) are needed and (b) the definitions and features of PBS and FBA.

Increasing Pre-service Teachers' Use of Differential Reinforcement: Effects of Performance Feedback on Consequences for Student Behavior.

This study evaluated the effects of performance feedback to increase the implementation of skills taught during in-service training.

Auld, R. G., Belfiore, P. J., & Scheeler, M. C. (2010). Increasing Pre-service Teachers’ Use of Differential Reinforcement: Effects of Performance Feedback on Consequences for Student Behavior. Journal of Behavioral Education, 19(2), 169-183.

The sustained use of research-based instructional practice: A case study of peer-assisted learning strategies in mathematics

This article explores factors influencing the sustained use of Peer Assisted Learning Strategies (PALS) in math in one elementary school.

Baker, S., Gersten, R., Dimino, J. A., & Griffiths, R. (2004). The sustained use of research-based instructional practice: A case study of peer-assisted learning strategies in mathematics. Remedial and Special Education, 25(1), 5-24.

The Use of E-Mail to Deliver Performance-Based Feedback to Early Childhood Practitioners.

This article reports three studies evaluating the effects of performance feedback delivered by e-mail as part of professional development.

Barton, E. E., Pribble, L., & Chen, C.-I. (2013). The Use of E-Mail to Deliver Performance-Based Feedback to Early Childhood Practitioners. Journal of Early Intervention, 35(3), 270-297.

Revisiting the Effect of Teaching of Learning Strategies on Academic Achievement: A Meta-Analysis of the Findings

This meta-analysis examined the effect of teaching learning strategies on students' academic achievement. Moderator analyses found no significant differences in effect sizes across studies in terms of sample size, publication type, course type, implementation duration, instructional level, school setting, or socioeconomic status.

Bas, G., & Beyhan, Ö. (2019). Revisiting the Effect of Teaching of Learning Strategies on Academic Achievement: A Meta-Analysis of the Findings. International Journal of Research in Education and Science, 5(1), 70-87.

First Step to Success: An Early Intervention for Elementary Children at Risk for Antisocial Behavior

The purpose of this study was to examine the effects of an early intervention strategy, First Step to Success, involving (a) teacher-directed and (b) a combination of teacher- and parent-directed strategies on the behaviors of elementary school children at risk for antisocial behavior.

Beard, K. Y., & Sugai, G. (2004). First Step to Success: An early intervention for elementary children at risk for antisocial behavior. Behavioral Disorders, 29(4), 396-409.

Questioning the Author: An approach for enhancing student engagement with text

The book presents many examples of Questioning the Author (QtA) in action as children engage with narrative and expository texts to construct meaning.

Beck, I. L., McKeown, M. G., Hamilton, R. L., & Kucan, L. (1997). Questioning the Author: An approach for enhancing student engagement with text. Newark, DE: International Reading Association.

Beyond Monet: The artful science of instructional integration.

This book highlights teaching practices and strategies introduced in schools, giving educators, evaluators, and researchers comprehensive evidence on the instructional strategies schools can use to significantly improve student outcomes.

Bennett, B., Rolheiser, C., & Normore, A. H. (2003). Beyond Monet: The artful science of instructional integration. Alberta Journal of Educational Research, 49(4), 383.

Putting the pieces together: An Integrated Model of program implementation

One of the primary goals of implementation science is to ensure that programs are implemented with integrity. This paper presents an integrated model of implementation that emphasizes treatment integrity.

Berkel, C., Mauricio, A. M., Schoenfelder, E., Sandler, I. N., & Collier-Meek, M. (2011). Putting the pieces together: An Integrated Model of program implementation. Prevention Science, 12, 23-33.

Classwide peer tutoring: An effective strategy for students with emotional and behavioral disorders.

This paper discusses Classwide Peer Tutoring as an effective strategy for students with emotional and behavioral disorders.

Bowman-Perrott, L. (2009). Classwide peer tutoring: An effective strategy for students with emotional and behavioral disorders. Intervention in School and Clinic, 44(5), 259-267.

Response to intervention: Principles and strategies for effective practice

This book provides practitioners with a complete guide to implementing response to intervention (RTI) in schools.

Brown-Chidsey, R., & Steege, M. W. (2011). Response to intervention: Principles and strategies for effective practice. Guilford Press.

Using performance feedback to enhance implementation fidelity of the problem-solving team process

This study examines the importance of implementation integrity for problem-solving teams (PST) and response-to-intervention models.

Burns, M. K., Peters, R., & Noell, G. H. (2008). Using performance feedback to enhance implementation fidelity of the problem-solving team process. Journal of School Psychology, 46(5), 537-550.

The effects of school-based decision-making on educational outcomes in low- and middle-income contexts: a systematic review

This review assesses the effectiveness of school-based decision-making about curricula, finance, management, and teachers. The report has implications for the impact of charter schools, as the primary intervention in that model is local control. It finds limited evidence of the effectiveness of these reforms, especially from low-income countries.

Carr-Hill, R., Rolleston, C., Pherali, T., & Schendel, R. (2014). The effects of school-based decision making on educational outcomes in low- and middle-income contexts: A systematic review.

Graphical Feedback to Increase Teachers' Use of Incidental Teaching.

Incidental teaching is often a component of early childhood intervention programs. This study evaluated the use of graphical feedback to increase teachers' use of incidental teaching.

Casey, A. M., & McWilliam, R. A. (2008). Graphical Feedback to Increase Teachers’ Use of Incidental Teaching. Journal of Early Intervention, 30(3), 251-268.

The impact of checklist-based training on teachers' use of the zone defense schedule.

One of the challenges for increasing treatment integrity is finding effective methods for doing so. This study evaluated the use of checklist-based training to increase treatment integrity.

Casey, A. M., & McWilliam, R. A. (2011). The impact of checklist-based training on teachers’ use of the zone defense schedule. Journal of Applied Behavior Analysis, 44(2), 397-401.

Data Matters: Using Chronic Absence to Accelerate Action for Student Success

The report provides recommendations and strategies for managing chronic absenteeism at all levels of education leadership, from state agencies through individual schools. It is accompanied by an interactive website (www.attendanceworks.org) where readers can drill down into specific data at all levels of the education system.

Chang, H. N., Bauer, L., & Byrnes, V. (2018, September). Data Matters: Using Chronic Absence to Accelerate Action for Student Success. Attendance Works and Everyone Graduates Center.

Using Performance Feedback To Decrease Classroom Transition Time And Examine Collateral Effects On Academic Engagement.

This study evaluated the impact of performance feedback on how well problem-solving teams implemented a structured decision-making protocol. Teams performed better when feedback was provided.

Codding, R. S., & Smyth, C. A. (2008). Using Performance Feedback To Decrease Classroom Transition Time And Examine Collateral Effects On Academic Engagement. Journal of Educational & Psychological Consultation, 18(4), 325-345.

Effects of Immediate Performance Feedback on Implementation of Behavior Support Plans.

This study investigated the effects of performance feedback to increase treatment integrity.

Codding, R. S., Feinberg, A. B., & Dunn, E. K. (2005). Effects of Immediate Performance Feedback on Implementation of Behavior Support Plans. Journal of Applied Behavior Analysis, 38(2), 205-219.

Using Performance Feedback to Improve Treatment Integrity of Classwide Behavior Plans: An Investigation of Observer Reactivity

This study evaluated the effects of performance feedback in increasing treatment integrity. It also evaluated the possible reactivity effects of being observed.

Codding, R. S., Livanis, A., Pace, G. M., & Vaca, L. (2008). Using Performance Feedback to Improve Treatment Integrity of Classwide Behavior Plans: An Investigation of Observer Reactivity. Journal of Applied Behavior Analysis, 41(3), 417-422.

Barriers to Implementing Classroom Management and Behavior Support Plans: An Exploratory Investigation.

This study examines obstacles encountered by 33 educators along with suggested interventions to overcome impediments to effective delivery of classroom management interventions or behavior support plans. Having the right classroom management plan isn’t enough if you can’t deliver the strategies to the students in the classroom.

Collier‐Meek, M. A., Sanetti, L. M., & Boyle, A. M. (2019). Barriers to implementing classroom management and behavior support plans: An exploratory investigation. Psychology in the Schools, 56(1), 5-17.

The effects of video modeling on staff implementation of a problem-solving intervention with adults with developmental disabilities.

This study evaluated the effects of video modeling on staff implementation of a problem solving intervention.

Collins, S., Higbee, T. S., & Salzberg, C. L. (2009). The effects of video modeling on staff implementation of a problem-solving intervention with adults with developmental disabilities. Journal of Applied Behavior Analysis, 42(4), 849-854.

Test Driving Interventions to Increase Treatment Integrity and Student Outcomes.

This study evaluated the effects of allowing teachers to “test drive” interventions and then select the intervention they most preferred. The result was an increase in treatment integrity.

Dart, E. H., Cook, C. R., Collins, T. A., Gresham, F. M., & Chenier, J. S. (2012). Test Driving Interventions to Increase Treatment Integrity and Student Outcomes. School Psychology Review, 41(4), 467-481.

Treatment Integrity: Fundamental to Education Reform

To produce better outcomes for students, two things are necessary: (1) effective, scientifically supported interventions and (2) implementation of those interventions with high integrity. Typically, much greater attention has been given to identifying effective practices. This review focuses on the features of high-quality implementation.

Detrich, R. (2014). Treatment integrity: Fundamental to education reform. Journal of Cognitive Education and Psychology, 13(2), 258-271.

Treatment Integrity: A Fundamental Unit of Sustainable Educational Programs.

Reform efforts tend to come and go very quickly in education. This paper makes the argument that the sustainability of programs is closely related to how well those programs are implemented.

Detrich, R., Keyworth, R. & States, J. (2010). Treatment Integrity: A Fundamental Unit of Sustainable Educational Programs. Journal of Evidence-Based Practices for Schools, 11(1), 4-29.

Treatment Integrity in the Problem Solving Process

The usual approach to determining if an intervention is effective for a student is to review student outcome data; however, this is only part of the task. Student data can only be understood if we know something about how well the intervention was implemented. Student data without treatment integrity data are largely meaningless because without knowing how well an intervention has been implemented, no judgments can be made about the effectiveness of the intervention. Poor outcomes can be a function of an ineffective intervention or poor implementation of the intervention. Without treatment integrity data, there is a risk that an intervention will be judged as ineffective when, in fact, the quality of implementation was so inadequate that it would be unreasonable to expect positive outcomes.

Detrich, R., States, J. & Keyworth, R. (2017). Treatment Integrity in the Problem Solving Process. Oakland, CA: The Wing Institute.

A comparison of performance feedback procedures on teachers' treatment implementation integrity and students' inappropriate behavior in special education classrooms.

This study compared the effects of goal setting about student performance and feedback about student performance with daily written feedback about student performance, feedback about accuracy of implementation, and cancelling meetings if the integrity criterion was met.

DiGennaro, F. D., Martens, B. K., & Kleinmann, A. E. (2007). A comparison of performance feedback procedures on teachers' treatment implementation integrity and students' inappropriate behavior in special education classrooms. Journal of Applied Behavior Analysis, 40(3), 447-461.

Increasing Treatment Integrity Through Negative Reinforcement: Effects on Teacher and Student Behavior

This study evaluated the impact of allowing teachers to miss coaching meetings if their treatment integrity scores met or exceeded criterion.

DiGennaro, F. D., Martens, B. K., & McIntyre, L. L. (2005). Increasing Treatment Integrity Through Negative Reinforcement: Effects on Teacher and Student Behavior. School Psychology Review, 34(2), 220-231.

Effects of video modeling on treatment integrity of behavioral interventions.

This study evaluated the effects of video modeling on how well teachers implemented interventions. Integrity increased but remained variable. More stable patterns of implementation were observed when teachers were given feedback about their performance.

DiGennaro Reed, F. D., Codding, R., Catania, C. N., & Maguire, H. (2010). Effects of video modeling on treatment integrity of behavioral interventions. Journal of Applied Behavior Analysis, 43(2), 291-295.

Effects of public feedback during RTI team meetings on teacher implementation integrity and student academic performance.

This study evaluated the impact of public feedback in RtI team meetings on the quality of implementation. Feedback improved poor implementation and maintained high levels of implementation.

Duhon, G. J., Mesmer, E. M., Gregerson, L., & Witt, J. C. (2009). Effects of public feedback during RTI team meetings on teacher implementation integrity and student academic performance. Journal of School Psychology, 47(1), 19-37.

Teachers and Shared Decision Making: The Costs and Benefits of Involvement

The authors conducted a study of teachers' perceptions of the potential costs and benefits of involvement in school decision making. The teachers interviewed rated the potential costs of decision making involvement as low and the potential benefits as high. Nevertheless, many were hesitant to become involved because they saw little possibility that their involvement would actually make a difference.

Duke, D. L., Showers, B. K., & Imber, M. (1980). Teachers and shared decision making: The costs and benefits of involvement. Educational Administration Quarterly, 16(1), 93-106.

Successful Prevention Programs for Children and Adolescents

This book presents a wide variety of exemplary programs addressing behavioral and social problems, school failure, drug use, injuries, child abuse, physical health, and other critical issues.

Durlak, J. A. (1997). Successful prevention programs for children and adolescents. Springer Science & Business Media.

Planning, Implementing and Evaluating Evidence-Based Interventions

This section includes tools and resources that can help school leaders, teachers, and other stakeholders be more strategic in their decision-making about planning, implementing, and evaluating evidence-based interventions to improve the conditions for learning and facilitate positive student outcomes.

Elliott, S. N., Witt, J. C., & Kratochwill, T. R. (1991). Selecting, implementing, and evaluating classroom interventions. In Interventions for achievement and behavior problems (pp. 99-135).

Forging Strategic Partnerships to Advance Mental Health Science and Practice for Vulnerable Children

The purpose of this article is to present a conceptual framework for advancing mental health science and practice for vulnerable children that is in accord with the Surgeon General’s priorities for change.

Fantuzzo, J., McWayne, C., & Bulotsky, R. (2003). Forging strategic partnerships to advance mental health science and practice for vulnerable children. School Psychology Review, 32(1), 17-37.

Implementation Research: A Synthesis of the Literature

This comprehensive literature review examines implementation across all of its stages, from adoption through sustainability.

Fixsen, D. L., Naoom, S. F., Blase, K. A., & Friedman, R. M. (2005). Implementation research: A synthesis of the literature.

Sustaining fidelity following the nationwide PMTO implementation in Norway

This paper describes the scaling up and dissemination of a parent training program in Norway while maintaining fidelity of implementation.

Forgatch, M. S., & DeGarmo, D. S. (2011). Sustaining fidelity following the nationwide PMTO implementation in Norway. Prevention Science, 12(3), 235-246. Retrieved from http://www.ncbi.nlm.nih.gov/pmc/articles/PMC3153633

Enhancing third-grade students' mathematical problem solving with self-regulated learning strategies.

The authors assessed the contribution of self-regulated learning strategies (SRL), when combined with problem-solving transfer instruction (L. S. Fuchs et al., 2003), on 3rd-graders' mathematical problem solving. SRL incorporated goal setting and self-evaluation.

Fuchs, L. S., Fuchs, D., Prentice, K., Burch, M., Hamlett, C. L., Owen, R., & Schroeter, K. (2003). Enhancing third-grade students' mathematical problem solving with self-regulated learning strategies. Journal of Educational Psychology, 95(2), 306.

Establishing Treatment Fidelity in Evidence-Based Parent Training Programs for Externalizing Disorders in Children and Adolescents.

This review evaluated methods for improving treatment integrity based on the National Institutes of Health Treatment Fidelity Workgroup. Strategies related to treatment design produced the highest levels of treatment integrity. Training and enactment of treatment skills resulted in the lowest level of treatment integrity across the 65 reviewed studies.

Garbacz, L., Brown, D., Spee, G., Polo, A., & Budd, K. (2014). Establishing Treatment Fidelity in Evidence-Based Parent Training Programs for Externalizing Disorders in Children and Adolescents. Clinical Child & Family Psychology Review, 17(3).

Strategies for Effective Classroom Coaching

This article aimed to present frameworks and practices coaches can use with classroom teachers to facilitate the implementation of evidence-based interventions in schools.

Garbacz, S. A., Lannie, A. L., Jeffrey-Pearsall, J. L., & Truckenmiller, A. J. (2015). Strategies for effective classroom coaching. Preventing School Failure: Alternative Education for Children and Youth, 59(4), 263-273.

Behavior analysis in education: Focus on measurably superior instruction.

This book was written to disseminate measurably superior instructional strategies to those interested in advancing sound, pedagogically effective, field-tested educational practices.

Gardner III, R. E., Sainato, D. M., Cooper, J. O., Heron, T. E., Heward, W. L., Eshleman, J. W., & Grossi, T. A. (1994). Behavior analysis in education: Focus on measurably superior instruction. Thomson Brooks/Cole Publishing Co. (Chapters revised from papers presented at the conference "Behavior Analysis in Education: Focus on Measurably Superior Instruction," sponsored by the Faculty in Applied Behavior Analysis, Ohio State University, September 1992.)

The impact of two professional development interventions on early reading instruction and achievement

To help states and districts make informed decisions about the PD they implement to improve reading instruction, the U.S. Department of Education commissioned the Early Reading PD Interventions Study to examine the impact of two research-based PD interventions for reading instruction: (1) a content-focused teacher institute series that began in the summer and continued through much of the school year (treatment A) and (2) the same institute series plus in-school coaching (treatment B).

Garet, M. S., Cronen, S., Eaton, M., Kurki, A., Ludwig, M., Jones, W., ... Zhu, P. (2008). The impact of two professional development interventions on early reading instruction and achievement. NCEE 2008-4030. Washington, DC: National Center for Education Evaluation and Regional Assistance.

Principal Pipelines: A Feasible, Affordable, and Effective Way for Districts to Improve Schools.

This RAND Corporation report evaluates the impact of the Principal Pipeline Initiative (PPI), a project supported by the Wallace Foundation to create and implement a strategic process for school leadership talent management. It documents what the PPI districts were able to accomplish, describing the implementation of the PPI and its effects on student achievement, other school outcomes, and principal retention.

Gates, S. M., Baird, M. D., Master, B. K., & Chavez-Herrerias, E. R. (2019). Principal Pipelines: A Feasible, Affordable, and Effective Way for Districts to Improve Schools (RR-2666-WF). Santa Monica, CA: RAND Corporation.

Supporting teacher use of interventions: effects of response dependent performance feedback on teacher implementation of a math intervention

This study examined general education teachers’ implementation of a peer tutoring intervention for five elementary students referred for consultation and intervention due to academic concerns. Treatment integrity was assessed via permanent products produced by the intervention.

Gilbertson, D., Witt, J. C., Singletary, L. L., & VanDerHeyden, A. (2007). Supporting teacher use of interventions: Effects of response dependent performance feedback on teacher implementation of a math intervention. Journal of Behavioral Education, 16(4), 311-326.

Timed Partner Reading and Text Discussion

This paper describes an activity that gives students an opportunity to improve their reading comprehension and text-based discussion skills. The activity, which can be used with intermediate and advanced learners, is ideal for English language learners in content classes and is particularly useful for building foundational knowledge of a new topic.

Giovacchini, M. (2017). Timed Partner Reading and Text Discussion. English Teaching Forum, 55(1), 36-39. Washington, DC: US Department of State, Bureau of Educational and Cultural Affairs, Office of English Language Programs.

The Importance and Decision-Making Utility of a Continuum of Fluency-Based Indicators of Foundational Reading Skills for Third-Grade High-Stakes Outcomes

In this article, we examine assessment and accountability in the context of a prevention-oriented assessment and intervention system designed to assess early reading progress formatively.

Good III, R. H., Simmons, D. C., & Kame'enui, E. J. (2001). The importance and decision-making utility of a continuum of fluency-based indicators of foundational reading skills for third-grade high-stakes outcomes. Scientific Studies of Reading, 5(3), 257-288.

Strategies for Improving Treatment Integrity in Organizational Consultation

Organizations employ many individuals, many of whom are responsible for implementing the same practice. If organizations are to meet their goals, they need systems for ensuring high levels of treatment integrity.

Gottfredson, D. C. (1993). Strategies for Improving Treatment Integrity in Organizational Consultation. Journal of Educational & Psychological Consultation, 4(3), 275.

The Prevention of Mental Disorders in School-Aged Children: Current State of the Field

In this review, the authors identify and describe 34 universal and targeted interventions that have demonstrated positive outcomes under rigorous evaluation.

Greenberg, M. T., Domitrovich, C., & Bumbarger, B. (2001). The prevention of mental disorders in school-aged children: Current state of the field. Prevention & Treatment, 4(1), 1a.

Will the “principles of effectiveness” improve prevention practice? Early findings from a diffusion study

This study examines adoption and implementation of the US Department of Education's new policy, the "Principles of Effectiveness," from a diffusion of innovations theoretical framework. In this report, we evaluate adoption in relation to Principle 3: the requirement to select research-based programs.

Hallfors, D., & Godette, D. (2002). Will the “principles of effectiveness” improve prevention practice? Early findings from a diffusion study. Health Education Research, 17(4), 461–470.

At a loss for words: How a flawed idea is teaching millions of kids to be poor readers.

For decades, schools have taught children the strategies of struggling readers, using a theory about reading that cognitive scientists have repeatedly debunked. And many teachers and parents don't know there's anything wrong with it.

Hanford, E. (2019). At a loss for words: How a flawed idea is teaching millions of kids to be poor readers. APM Reports. https://www.apmreports.org/story/2019/08/22/whats-wrong-how-schools-teach-reading

Reinforcement schedule thinning following treatment with functional communication training

The authors evaluated four methods for increasing the practicality of functional communication training (FCT) by decreasing the frequency of reinforcement for alternative behavior.

Hanley, G. P., Iwata, B. A., & Thompson, R. H. (2001). Reinforcement schedule thinning following treatment with functional communication training. Journal of Applied Behavior Analysis, 34(1), 17-38.

Empowering students through speaking round tables

This paper explains Round Tables, a practical, engaging alternative to the traditional classroom presentation. Round Tables are small groups of students in which each student is given a specific speaking role to perform.

Harms, E., & Myers, C. (2013). Empowering students through speaking round tables. Language Education in Asia, 4(1), 39-59.

A Comparison of Choral and Individual Responding: A Review of the Literature

This article reviews the literature comparing the effects of choral and individual responding. Results indicate that choral responding is generally associated with more positive outcomes than individual responding on student variables such as active student responding, on-task behavior, and correct responses.

Haydon, T., Marsicano, R., & Scott, T. M. (2013). A comparison of choral and individual responding: A review of the literature. Preventing School Failure: Alternative Education for Children and Youth, 57(4), 181-188.

Three "low-tech" strategies for increasing the frequency of active student response during group instruction.

Active student response (ASR) can be defined as an observable response made to an instructional antecedent. The author compares ASR with other measures of instructional time and student engagement and discusses three benefits of increasing the frequency of ASR during instruction.

Heward, W. L. (1994). Three "low-tech" strategies for increasing the frequency of active student response during group instruction.

Using choral responding to increase active student response.

There are numerous practical strategies for increasing active student response during group instruction. One of these strategies, Choral Responding, is the subject of this article.

Heward, W. L., Courson, F. H., & Narayan, J. S. (1989). Using choral responding to increase active student response. Teaching Exceptional Children, 21(3), 72-75.

Learning from teacher observations: Challenges and opportunities posed by new teacher evaluation systems

This article discusses the current focus on using teacher observation instruments as part of new teacher evaluation systems being considered and implemented by states and districts.

Hill, H., & Grossman, P. (2013). Learning from teacher observations: Challenges and opportunities posed by new teacher evaluation systems. Harvard Educational Review, 83(2), 371-384.

Observational Assessment for Planning and Evaluating Educational Transitions: An Initial Analysis of Template Matching

The authors used a direct observation-based approach to identify behavioral conditions in sending (i.e., special education) and receiving (i.e., regular education) classrooms and to identify targets for intervention that might facilitate the mainstreaming of behavior-disordered (BD) children.

Hoier, T. S., McConnell, S., & Pallay, A. G. (1987). Observational assessment for planning and evaluating educational transitions: An initial analysis of template matching. Behavioral Assessment.

Teacher and student behaviors in the contexts of grade-level and instructional grouping

This study aimed to examine active instruction and engagement across elementary, middle, and high schools using a large database of direct classroom observations.

Hollo, A., & Hirn, R. G. (2015). Teacher and student behaviors in the contexts of grade-level and instructional grouping. Preventing School Failure: Alternative Education for Children and Youth, 59(1), 30-39.

Examining the evidence base for school-wide positive behavior support.

The purposes of this manuscript are to propose core features that may apply to any practice or set of practices that proposes to be evidence-based in relation to School-wide Positive Behavior Support (SWPBS).

Horner, R. H., Sugai, G., & Anderson, C. M. (2010). Examining the evidence base for school-wide positive behavior support. Focus on Exceptional Children, 42(8), 1.

The importance of contextual fit when implementing evidence-based interventions.

“Contextual fit” is based on the premise that the match between an intervention and local context affects both the quality of intervention implementation and whether the intervention actually produces the desired outcomes for children and families.

Horner, R., Blitz, C., & Ross, S. (2014). The importance of contextual fit when implementing evidence-based interventions. Washington, DC: U.S. Department of Health and Human Services, Office of the Assistant Secretary for Planning and Evaluation. https://aspe.hhs.gov/system/files/pdf/77066/ib_Contextual.pdf

IPEDS presents NCES postsecondary survey.

Through several postsecondary surveys, NCES collects information about where and how the aid is distributed and whether it had an impact on student outcomes. This brochure describes the NCES postsecondary survey.

IPEDS presents NCES postsecondary survey. (2018). National Center for Education Statistics, 2017136.

Four Domains for Rapid School Improvement: An Implementation Framework. The Center on School Turnaround.

This paper describes “how” to effectively implement lasting school improvement initiatives that maximize leadership, develop talent, amplify instructional transformation, and shift the culture.

Jackson, K. R., Fixsen, D., & Ward, C. (2018). Four Domains for Rapid School Improvement: An Implementation Framework. The Center on School Turnaround.

The mirage: Confronting the hard truth about our quest for teacher development

This piece describes the widely held perception among education leaders that we already know how to help teachers improve, and that we could achieve our goal of great teaching in far more classrooms if we just applied what we know more widely.

Jacob, A., & McGovern, K. (2015). The mirage: Confronting the hard truth about our quest for teacher development. Brooklyn, NY: TNTP. https://tntp.org/assets/documents/TNTP-Mirage_2015.pdf.

Restorative justice in Oakland schools. Implementation and impact: An effective strategy to reduce racially disproportionate discipline, suspensions, and improve academic outcomes.

This study examines the impact of the Whole School Restorative Justice Program (WSRJ). WSRJ uses a multi-tiered strategy: Tier 1 is regular classroom circles, Tier 2 is repair harm/conflict circles, and Tier 3 includes mediation, family group conferencing, and welcome/re-entry circles to initiate the successful reintegration of students being released from juvenile detention centers. The key findings of this report show decreased problem behavior, improved school climate, and improved student achievement.

Jain, S., Bassey, H., Brown, M. A., & Kalra, P. (2014). Restorative justice in Oakland schools. Implementation and impact: An effective strategy to reduce racially disproportionate discipline, suspensions, and improve academic outcomes.

The Effects of Feedback Interventions on Performance: A Historical Review, a Meta-Analysis, and a Preliminary Feedback Intervention Theory

The authors proposed a preliminary feedback intervention (FI) theory (FIT) and tested it with moderator analyses. The central assumption of FIT is that FIs change the locus of attention among three general and hierarchically organized levels of control: task learning, task motivation, and meta-task (including self-related) processes.

Kluger, A. N., & DeNisi, A. (1996). The effects of feedback interventions on performance: A historical review, a meta-analysis, and a preliminary feedback intervention theory. Psychological Bulletin, 119(2), 254.

Focus on teaching: Using video for high-impact instruction

This book examines the use of video recording to improve teacher performance. The book shows how every classroom can easily benefit from setting up a camera and hitting “record”.

Knight, J. (2013). Focus on teaching: Using video for high-impact instruction (pp. 8-14). Thousand Oaks, CA: Corwin.

Using Guided Notes to Enhance Instruction for All Students

The purpose of this article is to provide teachers with several suggestions for creating and using guided notes to enhance other effective teaching methods, support students’ studying, and promote higher order thinking.

Konrad, M., Joseph, L. M., & Itoi, M. (2011). Using guided notes to enhance instruction for all students. Intervention in School and Clinic, 46(3), 131-140.

Dubious “Mozart effect” remains music to many Americans’ ears.

Scientists have discredited claims that listening to classical music enhances intelligence, yet this so-called "Mozart Effect" has actually exploded in popularity over the years.

Krakovsky, M. (2005). Dubious “Mozart effect” remains music to many Americans’ ears. Stanford, CA: Stanford Report.

The Reading Wars

An old disagreement over how to teach children to read (whole language versus phonics) has re-emerged in California in a new form. Previously confined largely to education, the dispute is now a full-fledged political issue there and is likely to become one in other states.

Lemann, N. (1997). The reading wars. The Atlantic Monthly, 280(5), 128–133.

Dear Colleague Letter: Resource Comparability

Dear Colleague Letter: Resource Comparability is a letter issued by the United States Department of Education. It calls attention to persistent disparities in access to educational resources and is meant to help schools address those disparities and comply with their legal obligation to provide students with equal access to these resources without regard to race, color, or national origin (the letter addresses legal obligations under Title VI of the Civil Rights Act of 1964). The letter builds on prior work shared by the U.S. Department of Education on this critical topic.

Lhamon, C. E. (2014). Dear colleague letter: Resource comparability. Washington, DC: US Department of Education, Office for Civil Rights.

Do Pay-for-Grades Programs Encourage Student Academic Cheating? Evidence from a Randomized Experiment

Using a randomized control trial in 11 Chinese primary schools, we studied the effects of pay-for-grades programs on academic cheating. We randomly assigned 82 classrooms into treatment or control conditions, and used a statistical algorithm to determine the occurrence of cheating.

Li, T., & Zhou, Y. (2019). Do Pay-for-Grades Programs Encourage Student Academic Cheating? Evidence from a Randomized Experiment. Frontiers of Education in China, 14(1), 117-137.

Common Core’s Major Political Challenges for the Remainder of 2016

This article addresses what to expect in Common Core's immediate political future, discussing four key challenges that the Common Core State Standards (CCSS) will face between now and the end of the year. Common Core is now several years into implementation, yet supporters have had a difficult time persuading skeptics that any positive results have occurred, and the best evidence on that question has been mixed. The political challenges Common Core faces during the remainder of this year may determine whether it survives.

Four Classwide Peer Tutoring Models: Similarities, Differences, and Implications for Research and Practice

In this special issue, the authors introduce a fourth peer teaching model, Classwide Student Tutoring Teams. They also provide a comprehensive analysis of common and divergent programmatic components across all four models and discuss the implications of this analysis for researchers and practitioners alike.

Maheady, L., Mallette, B., & Harper, G. F. (2006). Four classwide peer tutoring models: Similarities, differences, and implications for research and practice. Reading & Writing Quarterly, 22(1), 65-89.

The Association between Teachers’ Use of Formative Assessment Practices and Students’ Use of Self-Regulated Learning Strategies

Many schools and teachers are striving to create more dynamic classroom learning environments that encourage students to think critically and manage their own learning in preparation for college and careers. Some observers view formative assessment as a means to this end.

Makkonen, R., & Jaquet, K. (2020). The Association between Teachers' Use of Formative Assessment Practices and Students' Use of Self-Regulated Learning Strategies. REL 2021-041. Regional Educational Laboratory West.

Effects of the Cloze Procedure on Good and Poor Readers' Comprehension.

This study examined the effects of a cloze procedure, developed from the transfer feature theory of processing in reading, on the immediate and delayed recall of good and poor readers.

McGee, L. M. (1981). Effects of the Cloze Procedure on Good and Poor Readers' Comprehension. Journal of Reading Behavior, 13(2), 145-156.

How to reverse the assault on science.

We should stop being so embarrassed by uncertainty and embrace it as a strength rather than a weakness of scientific reasoning.

McIntyre, L. (2019, May 22). How to reverse the assault on science. Scientific American. https://blogs.scientificamerican.com/observations/how-to-reverse-the-assault-on-science1/

Whole language lives on: The illusion of “balanced” reading instruction.

This position paper contends that the whole language approach to reading instruction has been disproved by research and evaluation but still pervades textbooks for teachers, instructional materials for classroom use, some states' language-arts standards and other policy documents, teacher licensing requirements and preparation programs, and the professional context in which teachers work.

Moats, L. C. (2000). Whole language lives on: The illusion of “balanced” reading instruction. Washington, DC: DIANE Publishing.

Should U.S. Students Do More Math Practice and Drilling?

Should U.S. students be doing more math practice and drilling in their classrooms? That’s the suggestion from last week’s most emailed New York Times op-ed. The op-ed’s author argued that more practice and drilling could help narrow math achievement gaps, which emerge in the U.S. as early as the primary grades.

Morgan, P. L. (2018). Should U.S. students do more math practice and drilling? Psychology Today. Retrieved from https://www.psychologytoday.com/us/blog/children-who-struggle/201808/should-us-students-do-more-math-practice-and-drilling

A systematic review of single-case research on video analysis as professional development for special educators.

This meta-analysis reports on the overall effectiveness of video analysis when used with special educators, as well as on moderator analyses related to participant and instructional characteristics.

Morin, K. L., Ganz, J. B., Vannest, K. J., Haas, A. N., Nagro, S. A., Peltier, C. J., … & Ura, S. K. (2019). A systematic review of single-case research on video analysis as professional development for special educators. The Journal of Special Education, 53(1), 3-14.

Increasing intervention implementation in general education following consultation: A comparison of two follow-up strategies.

This study compared two follow-up strategies for increasing the integrity of intervention implementation: discussing implementation challenges and providing performance feedback. Performance feedback was more effective than discussion in increasing integrity.

Noell, G. H., & Witt, J. C. (2000). Increasing intervention implementation in general education following consultation: A comparison of two follow-up strategies. Journal of Applied Behavior Analysis, 33(3), 271.

Equality and Quality in U.S. Education: Systemic Problems, Systemic Solutions. Policy Brief

This paper enters the debate about how U.S. schools might address long-standing disparities in educational and economic opportunities while improving educational outcomes for all students. It offers a vision, and an argument for realizing that vision, based on lessons learned from 60 years of education research and reform efforts. The central points draw on a much more extensive treatment of these issues published in 2015. The aim is to spark fruitful discussion among educators, policymakers, and researchers.

O'Day, J. A., & Smith, M. S. (2016). Equality and Quality in US Education: Systemic Problems, Systemic Solutions. Policy Brief. Education Policy Center at American Institutes for Research.

Why Trust Science?

Naomi Oreskes offers a bold and compelling defense of science, revealing why the social character of scientific knowledge is its greatest strength, and the greatest reason we can trust it.

Oreskes, N. (2019). Why trust science? Princeton, NJ: Princeton University Press.

Evidence-Based Classroom Behaviour Management Strategies

This paper reviews a range of evidence-based strategies for application by teachers to reduce disruptive and challenging behaviours in their classrooms.

Parsonson, B. S. (2012). Evidence-Based Classroom Behaviour Management Strategies. Kairaranga, 13(1), 16-23.

Helping students help themselves: Generative learning strategies improve middle school students’ self-regulation in a cognitive tutor

The current study investigated whether prompting students to engage in generative learning strategies improves students' subsequent judgments of learning and self-regulation. Seventy-eight middle school students in a pre-algebra class completed worksheets in between problem-solving sessions in a computer-based cognitive tutor.

Pilegard, C., & Fiorella, L. (2016). Helping students help themselves: Generative learning strategies improve middle school students’ self-regulation in a cognitive tutor. Computers in Human Behavior, 65, 121–126.

Music and spatial task performance.

This research paper reports on testing the hypothesis that music and spatial task performance are causally related. Two complementary studies are presented that replicate and explore previous findings.

Rauscher, F. H., Shaw, G. L., & Ky, C. N. (1993). Music and spatial task performance. Nature, 365(6447), 611.

Practical statistics for educators.

The book focuses on essential concepts in educational statistics, on when to use various statistical tests, and on how to interpret the results. It introduces education students and practitioners to the use of statistics in education and explains basic statistical concepts in clear language.

Ravid, R. (2019). Practical statistics for educators. Rowman & Littlefield Publishers.

Cloze Procedure and the Teaching of Reading

Many teachers in both first language and second language classrooms adopt the cloze procedure, defined here as the rational deletions a teacher makes in a text in the hope of teaching something about reading. The fact that the cloze procedure "works", has a "mechanical simplicity", "is simple and straightforward", and "does not involve experts or difficult administrative judgments" (Taylor, 1953: 432) has a great deal of appeal for experienced and novice teachers alike. However, many teachers do not realize that the cloze procedure is deceptively simple. Undifferentiated use of the cloze procedure in a first or second language classroom in the hope of some kind of reading improvement is very dubious (Jongsma, 1980: 15) and has been termed the 'shotgun approach' (Jongsma, 1980: 25). Teachers must understand why the cloze procedure can be used to teach reading; its effective classroom implementation depends on careful text selection, preparation, and presentation.

Raymond, P. (1988). Cloze procedure in the teaching of reading. TESL Canada Journal, 6(1), 91–97.