Formative Assessment

States, J., Detrich, R. & Keyworth, R. (2017). Overview of Formative Assessment. Oakland, CA: The Wing Institute. http://www.winginstitute.org/student-formative-assessment.

For teachers, few skills are as important or powerful as formative assessment (also known as progress monitoring and rapid assessment). This process of frequent and ongoing feedback on the effects of instruction gives teachers insight into when and how to adjust instruction to maximize learning. The assessment data are used to verify student progress and act as indicators to adjust interventions when insufficient progress has been made or a particular concept has been mastered (VanDerHeyden, 2013). Over the past 30 years, formative assessment has been found to be effective in typical classroom settings. The practice has shown power across student ages, treatment durations, and frequencies of measurement, as well as with students with special needs (Hattie, 2009).

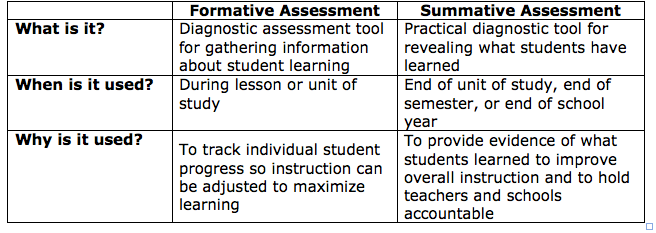

Summative assessment, another important assessment tool commonly used in schools, should not be confused with formative assessment. The two play important but very different roles in an effective model of education. Both are integral in gathering information necessary for maximizing student success, but they differ in important ways (see Figure 1).

Summative assessment evaluates the overall effectiveness of teaching at the end of a class, end of a semester, or end of the school year. This type of assessment is used to determine at a particular time what students know and do not know. It is most often associated with standardized tests such as state achievement assessments but is also commonly used by teachers to assess the overall progress of students in determining grades (Geiser & Santelices, 2007). Since the advent of No Child Left Behind, summative assessment has increasingly been used to hold schools and teachers accountable for student progress, and its use is likely to continue under the Every Student Succeeds Act.

In contrast, formative assessment is a practical diagnostic tool for routinely determining student progress. Formative assessment allows teachers to quickly ascertain if individual students are progressing at acceptable rates and provides insight into when and how to modify and adapt lessons, with the goal of making sure all students are progressing satisfactorily.

Comparing Formative Assessment and Summative Assessment

Figure 1. Comparing two types of assessment

Both formative assessment and summative assessment are essential components of information gathering, but they should be used for the purposes for which they were designed.

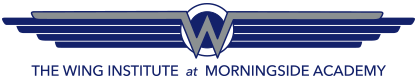

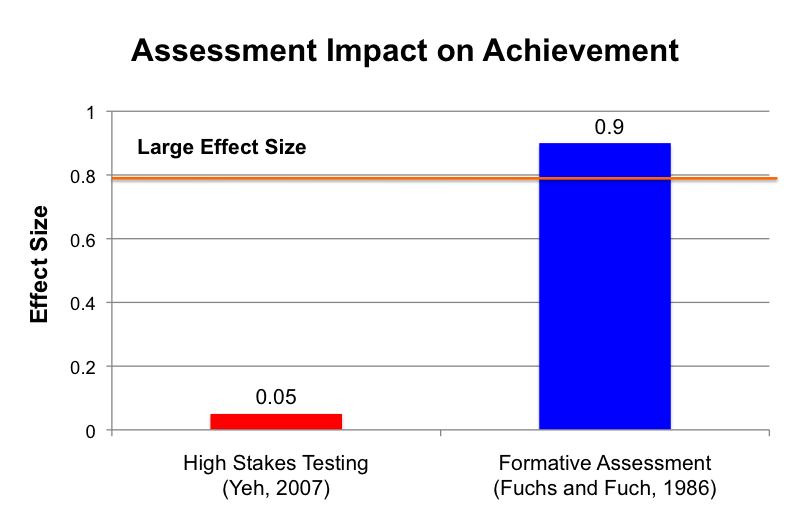

Figure 2 offers a data display examining the relative impact of formative assessment and summative assessment (the latter in the form of high-stakes testing). Research shows a clear advantage for formative assessment in improving student performance.

Figure 2. Comparison of formative assessment and summative assessment impact on student achievement

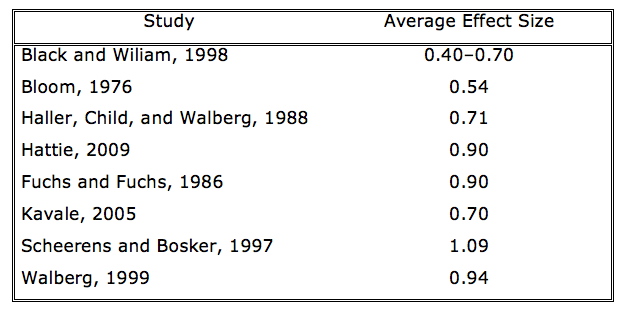

Research consistently lists formative assessment in the top tier of variables that make a difference in improving student achievement (Hattie, 2009; Marzano, 1998). In 1986, Fuchs and Fuchs conducted the first comprehensive quantitative examination of formative assessment. They found that it had an impressive 0.90 effect size on student achievement. Figure 3 provides the effect size of formative assessment, gleaned from multiple studies over more than 40 years of research on the topic.
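An effect size expresses the difference between two groups in standard deviation units. As a minimal sketch, assuming the common standardized mean difference (Cohen's d with a pooled standard deviation), the calculation can be written as follows. The function name and the sample scores are hypothetical illustrations, not data from Fuchs and Fuchs (1986) or any other study cited here, and the cited studies may have used a different effect size formula.

```python
from math import sqrt
from statistics import mean, variance


def cohens_d(treatment, control):
    """Standardized mean difference using the pooled sample variance."""
    n_t, n_c = len(treatment), len(control)
    # Weight each group's sample variance by its degrees of freedom.
    pooled_var = (
        (n_t - 1) * variance(treatment) + (n_c - 1) * variance(control)
    ) / (n_t + n_c - 2)
    return (mean(treatment) - mean(control)) / sqrt(pooled_var)


# Hypothetical scores chosen only to keep the arithmetic easy to follow.
print(cohens_d([2, 4, 6], [1, 3, 5]))  # 0.5
```

Read this way, an effect size of 0.90 would mean that the average student in the formative assessment group scored 0.90 standard deviations above the average student in the comparison group.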

Figure 3: Effect size of formative assessment

At its core, formative assessment uses feedback to improve student performance. It furnishes teachers with indicators of each student’s progress, which can be used to determine when and how to adjust instruction to maximize learning. Feedback is ranked at or near the top of practices known to significantly raise student achievement (Kluger & DeNisi, 1996; Marzano, Pickering, & Pollock, 2001; Walberg, 1999). It is not surprising that data-based decision-making approaches such as response to intervention (RtI) and positive behavior interventions and supports (PBIS) depend heavily on formative assessment.

Another important feature of well-designed formative assessment is the incorporation of grade-level norms into the assessment process. Grade-level norms are a valuable yardstick enabling teachers to more efficiently compare each student’s performance against normed standards (McLaughlin & Shepard, 1995). In addition to allowing teachers to determine whether a student met or missed a target, grade-level norms offer teachers a clear picture of whether students are meeting important goals in the standards and quickly identify struggling students who need more intensive support.
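The comparison against a grade-level norm can be sketched in a few lines. This is only an illustration under stated assumptions: the function name, the student labels, the scores, and the benchmark value of 60 are all hypothetical and are not taken from any published norm table.

```python
def students_below_benchmark(scores, benchmark):
    """Return the students scoring below the benchmark, largest gap first."""
    # Gap = how far each struggling student is from the grade-level target.
    gaps = {name: benchmark - score for name, score in scores.items() if score < benchmark}
    return sorted(gaps, key=gaps.get, reverse=True)


# Hypothetical class data and benchmark, for illustration only.
class_scores = {"Student A": 52, "Student B": 71, "Student C": 40}
print(students_below_benchmark(class_scores, benchmark=60))  # ['Student C', 'Student A']
```

Sorting by the size of the gap puts the students who may need the most intensive support at the front of the list.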

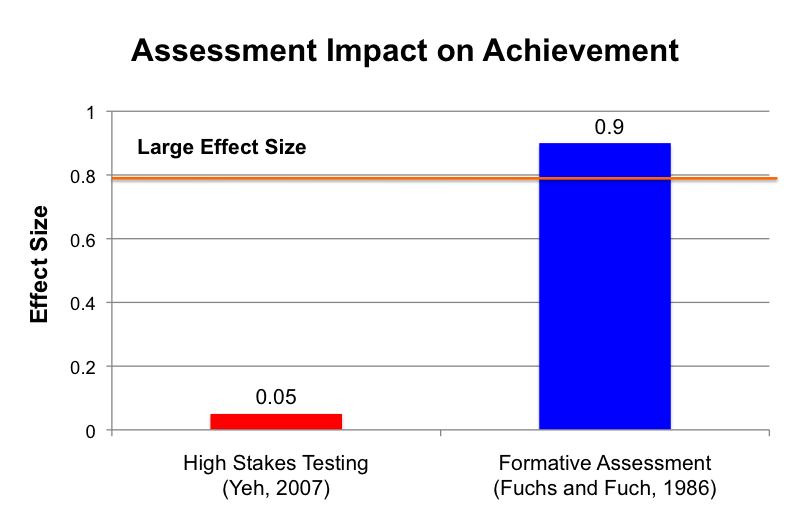

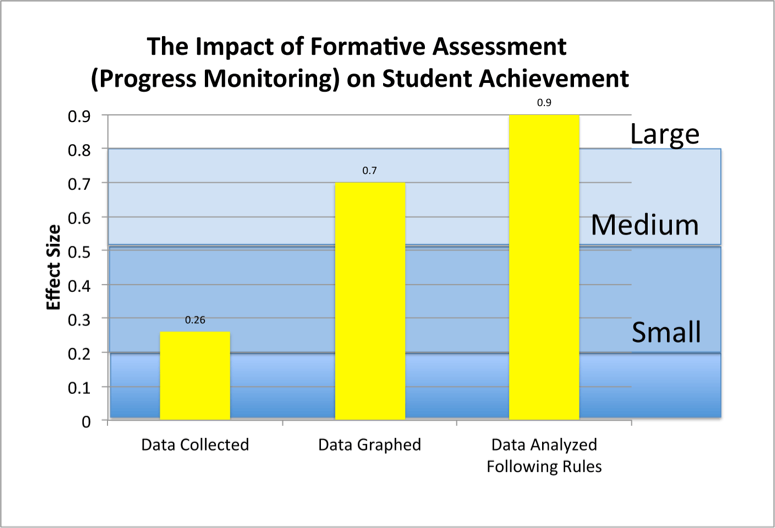

Fuchs and Fuchs conducted the first extensive quantitative examination of formative assessment in 1986. This meta-analysis added considerably to the knowledge base by identifying the essential practice elements that increase the impact of ongoing formative assessment. The impact is equivalent to raising student achievement in an average nation such as the United States to that of the top five nations (Black & Wiliam, 1998). As can be seen in Figure 4, Fuchs and Fuchs reported that the impact of formative assessment is significantly enhanced by the cumulative effect of three practice elements. The practice begins with collecting data weekly (0.26 effect size). When teachers interact with the collected data by graphing it, the effect size increases to 0.70. Adding decision rules to aid teachers in analyzing the graphed data increases the effect size to 0.90.

Figure 4: Impact of formative assessment on student achievement
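The three practice elements (weekly data collection, graphing, and decision rules) can be illustrated with a short sketch. The source does not say which decision rules the reviewed studies used, so the rule below is an assumption: a consecutive-data-point rule that compares recent weekly scores with a straight aim line drawn from a baseline score to a goal score. The function names, the default of four points, and the sample scores are all hypothetical.

```python
def aim_line(baseline, goal, n_weeks):
    """Expected score for each weekly data point, rising linearly from baseline to goal."""
    step = (goal - baseline) / (n_weeks - 1)
    return [baseline + step * week for week in range(n_weeks)]


def decide(scores, baseline, goal, n_weeks, k=4):
    """Apply a k-consecutive-point rule to the weekly scores collected so far."""
    expected = aim_line(baseline, goal, n_weeks)[: len(scores)]
    recent = list(zip(scores, expected))[-k:]
    if len(recent) < k:
        return "keep collecting data"
    if all(score < target for score, target in recent):
        return "adjust instruction"
    if all(score > target for score, target in recent):
        return "raise the goal"
    return "continue current instruction"


# Hypothetical weekly scores: baseline of 20, goal of 40 by the 11th data point.
print(decide([20, 21, 22, 23, 24, 25], baseline=20, goal=40, n_weeks=11))  # adjust instruction
```

A rule of this kind turns the graph into a prompt for action: the teacher does not have to judge the trend by eye, which is one plausible reason decision rules add to the effect of graphing alone.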

Why Is Formative Assessment Important?

Much has been said about the importance of selecting evidence-based practices for use in schools. One of the most common failures in building an evidence-based culture is overreliance on selecting interventions and underreliance on managing the interventions (VanDerHeyden & Tilly, 2010). Adopting an evidence-based practice, although an important first step, does not guarantee that the practice will produce the desired results. Even if every action leading up to implementation is flawless, if the intervention is not implemented as designed, it will likely fail and learning will not occur (Detrich, 2014). A growing body of research is now available to help teachers identify and overcome obstacles to implementing practices accurately (Fixsen, Naoom, Blase, Friedman, & Wallace, 2005; Witt, Noell, LaFleur, & Mortenson, 1997). Formative assessment and treatment integrity checks constitute the basic tool kit enabling schools to avoid or quickly remedy failures during implementation.

The fact is, not all practices produce positive outcomes for all students. In medicine, not all patients respond positively to a given treatment. The same holds true in education: Not all students respond identically to an education intervention. Given the possibility that even good practices may produce poor outcomes, it is incumbent on educators to monitor student progress frequently. Formal and routine sampling of student performance significantly reduces the likelihood that struggling students will fall through the cracks.

Common informal sampling methods such as having students answer questions by raising their hands aren’t sufficient. It is imperative that teachers have a clear understanding of each student’s progress toward mastery of standards. This is important not just for the lesson at hand but also for future success. A systematically planned curriculum builds on learned skills across a school year. Skills learned in one assignment are very often the foundation skills needed for success in subsequent lessons. Today’s failure may increase the possibility of failure tomorrow. For example, students who fall behind in reading by the third grade have been found to have poorer academic success, including a significantly greater likelihood of dropping out of high school (Celio & Harvey, 2005; Lesnick, Goerge, Smithgall, & Gwynne, 2010).

It is only through ongoing monitoring that problems can be identified early and adjustments made to teaching strategies to ensure greater success for all students. In this way, formative assessment guides teachers on when and how to improve instructional delivery and make effective adjustments to the curriculum. This is necessary for helping struggling students as well as adapting instruction for gifted students.

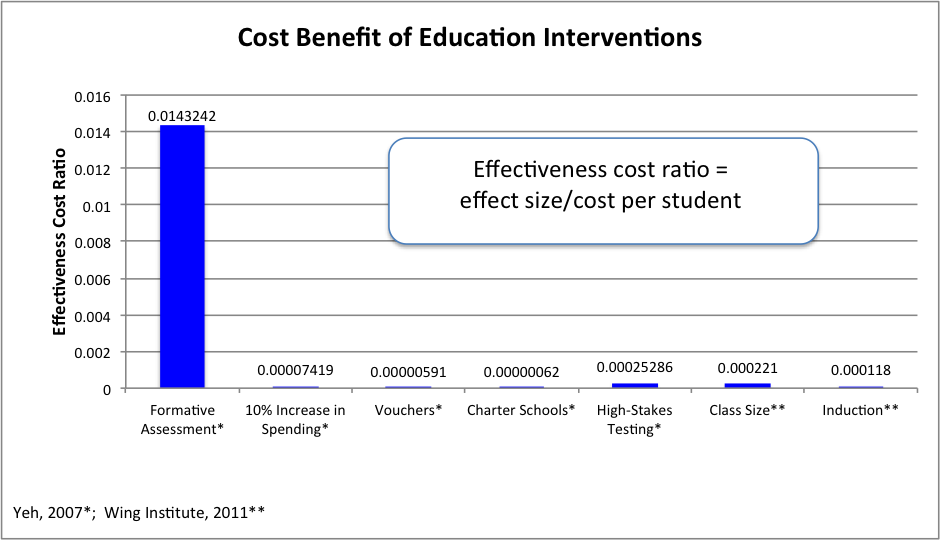

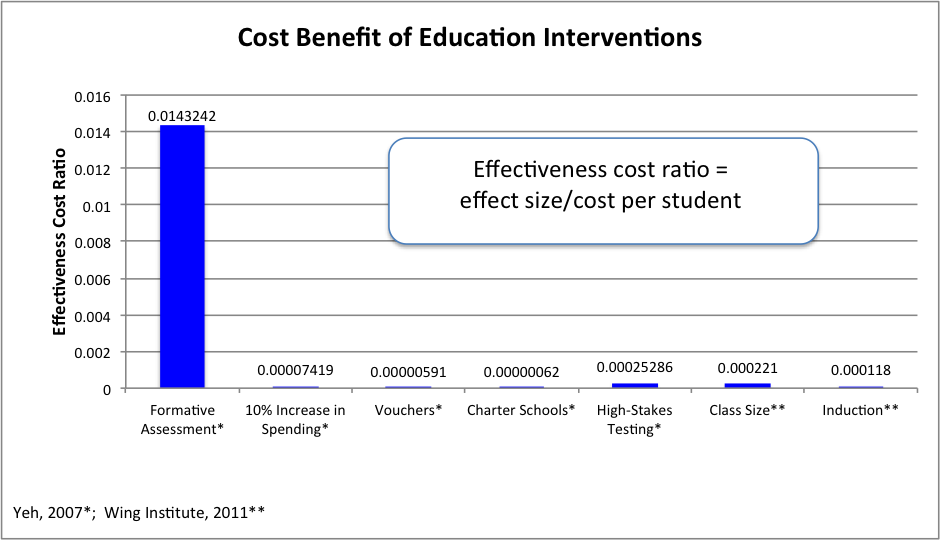

In addition to its notable impact on achievement, formative assessment offers an impressive return on investment compared with other popular reform practices. In a cost-effectiveness analysis of frequently adopted education interventions, Yeh (2007) found that formative assessment (which he referred to as rapid assessment) outperformed other common reform practices. He found the advantage for formative assessment striking compared with a 10% increase in spending, vouchers, charter schools, or high-stakes testing (see Figure 5).

Figure 5: Return on investment of common education interventions

The Figure 5 data display draws on Yeh’s (2007) analysis and The Wing Institute’s analysis to compare the cost-effectiveness of formative assessment with that of six common structural interventions.

Yeh compared the cost and outcomes of alternative practices to aid education decision makers in selecting economical and productive choices (Levin, 1988; Levin & McEwan, 2002). Educational cost-effectiveness analyses are designed to assess key educational outcomes, such as student achievement relative to the monetary resources needed to achieve worthy results. Cost-effectiveness analyses provide a practical and systematic architecture that permits educators to more effectively compare the real impact of interventions.
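The core arithmetic of such a comparison can be sketched as a ratio of effect to cost. This is a simplified illustration, not a reproduction of Yeh's method: the function name is hypothetical, and the intervention labels, effect sizes, and per-student costs below are invented placeholders rather than figures from Yeh (2007) or Figure 5.

```python
def rank_by_cost_effectiveness(interventions):
    """Return (name, effect per dollar) pairs, most cost-effective first."""
    ratios = {
        name: effect_size / cost_per_student
        for name, (effect_size, cost_per_student) in interventions.items()
    }
    return sorted(ratios.items(), key=lambda item: item[1], reverse=True)


# Placeholder values: (effect size, annual cost per student in dollars).
options = {
    "Intervention A": (0.30, 15.0),
    "Intervention B": (0.10, 100.0),
    "Intervention C": (0.20, 400.0),
}
for name, ratio in rank_by_cost_effectiveness(options):
    print(f"{name}: {ratio:.4f} effect size per dollar")
```

In this made-up example, Intervention C has a larger effect than Intervention B but ranks below it once cost is taken into account, which is the kind of trade-off a cost-effectiveness analysis is meant to expose.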

Although the structural interventions identified in Figure 5 are designed to address an array of differing issues impacting schools, a fair comparison can be made because all the interventions aim to improve student achievement. In the end, decision makers need to know which approaches produce the greatest benefit for the dollars invested. A given practice may be very effective, but if it costs more than the resources available for implementation, the practice is of little use to the average school.

Summary

It is clear from years of rigorous research that formative assessment produces important results. It is also true that ongoing assessment carried out through the school year is necessary for teachers to grasp when and how to adjust instruction and curriculum to meet the various needs of struggling students as well as gifted students. Finally, cost-effectiveness research reveals that formative assessment is not only effective, but one of the most cost-effective interventions available to schools for boosting student performance.

Citations

Black, P., & Wiliam, D. (1998). Assessment and classroom learning. Assessment in Education: Principles, Policy & Practice, 5(1), 7–74.

Bloom, B. S. (1976). Human characteristics and school learning. New York, NY: McGraw-Hill.

Celio, M. B., & Harvey, J. (2005). Buried treasure: Developing a management guide from mountains of school data. Seattle, WA: University of Washington, Center on Reinventing Public Education.

Detrich, R. (2014). Treatment integrity: Fundamental to education reform. Journal of Cognitive Education and Psychology, 13(2), 258–271.

Fixsen, D. L., Naoom, S. F., Blase, K. A., Friedman, R. M., & Wallace, F. (2005). Implementation research: A synthesis of the literature (FMHI Publication No. 231). Tampa, FL: University of South Florida, Louis de la Parte Florida Mental Health Institute, the National Implementation Research Network.

Fuchs, L. S., & Fuchs, D. (1986). Effects of systematic formative evaluation: A meta-analysis. Exceptional Children, 53(3), 199–208.

Geiser, S., & Santelices, M. V. (2007). Validity of high-school grades in predicting student success beyond the freshman year: High-school record vs. standardized tests as indicators of four-year college outcomes (Research and Occasional Paper Series CSHE. 6.07). Berkeley, CA: University of California, Berkeley, Center for Studies in Higher Education.

Haller, E. P., Child, D. A., & Walberg, H. J. (1988). Can comprehension be taught? A quantitative synthesis of “metacognitive” studies. Educational Researcher, 17(9), 5–8.

Hattie, J. (2009). Visible learning: A synthesis of over 800 meta-analyses relating to achievement. New York, NY: Routledge.

Kavale, K. A. (2005). Identifying specific learning disability: Is responsiveness to intervention the answer? Journal of Learning Disabilities, 38(6), 553–562.

Kluger, A. N., & DeNisi, A. S. (1996). The effects of feedback interventions on performance: A historical review, a meta-analysis, and a preliminary feedback intervention theory. Psychological Bulletin, 119(2), 254–284.

Lesnick, J., Goerge, R., Smithgall, C., & Gwynne, J. (2010). Reading on grade level in third grade: How is it related to high school performance and college enrollment? Chicago, IL: Chapin Hall at the University of Chicago, 1, 12.

Levin, H. M. (1988). Cost-effectiveness and educational policy. Educational Evaluation and Policy Analysis, 10(1), 51–69.

Levin, H. M., & McEwan, P. J. (Eds.). (2002). Cost-effectiveness and educational policy. Larchmont, NY: Eye on Education.

Marzano, R. J. (1998). A theory-based meta-analysis of research on instruction. Aurora, CO: Mid-Continent Regional Educational Laboratory.

Marzano, R. J., Pickering, D. J., & Pollock, J. E. (2001). Classroom instruction that works: Research-based strategies for increasing student achievement. Alexandria, VA: Association for Supervision and Curriculum Development.

McLaughlin, M. W., & Shepard, L. A. (1995). Improving education through standards-based reform. A report by the National Academy of Education Panel on Standards-Based Education Reform. Palo Alto, CA: Stanford University Press.

Scheerens, J., & Bosker, R. J. (1997). The foundations of educational effectiveness. Oxford, UK: Pergamon.

VanDerHeyden, A. (2013). Are we making the differences that matter in education? In R. Detrich, R. Keyworth, & J. States (Eds.), Advances in evidence-based education: Vol 3. Performance feedback: Using data to improve educator performance (pp. 119–138). Oakland, CA: The Wing Institute. http://www.winginstitute.org/uploads/docs/Vol3Ch4.pdf

VanDerHeyden, A. M., & Tilly, W. D. (2010). Keeping RtI on track: How to identify, repair and prevent mistakes that derail implementation. Horsham, PA: LRP Publications.

Walberg, H. J. (1999). Productive teaching. In H. C. Waxman & H. J. Walberg (Eds.), New directions for teaching, practice, and research (pp. 75–104). Berkeley, CA: McCutchen.

Witt, J. C., Noell, G. H., LaFleur, L. H., & Mortenson, B. P. (1997). Teacher use of interventions in general education settings: Measurement and analysis of the independent variable. Journal of Applied Behavior Analysis, 30(4), 693–696.

Yeh, S. S. (2007). The cost-effectiveness of five policies for improving student achievement. American Journal of Evaluation, 28(4), 416–436.

TITLE

SYNOPSIS

CITATION

LINK

Must try harder: Evaluating the role of effort in educational attainment.

This paper is based on the simple idea that students’ educational achievement is affected by the effort put in by those participating in the education process: schools, parents, and, of course, the students themselves.

Introduction: Proceedings from the Wing Institute’s Sixth Annual Summit on Evidence-Based Education: Performance Feedback: Using Data to Improve Educator Performance.

This book is compiled from the proceedings of the sixth summit entitled “Performance Feedback: Using Data to Improve Educator Performance.” The 2011 summit topic was selected to help answer the following question: What basic practice has the potential for the greatest impact on changing the behavior of students, teachers, and school administrative personnel?

States, J., Keyworth, R. & Detrich, R. (2013). Introduction: Proceedings from the Wing Institute’s Sixth Annual Summit on Evidence-Based Education: Performance Feedback: Using Data to Improve Educator Performance. In Education at the Crossroads: The State of Teacher Preparation (Vol. 3, pp. ix-xii). Oakland, CA: The Wing Institute.

Teachers' Subject Matter Knowledge as a Teacher Qualification: A Synthesis of the Quantitative Literature on Students' Mathematics Achievement

The aim of this paper is to examine a variety of features of research that might account for mixed findings of the relationship between teachers' subject matter knowledge and student achievement based on meta-analytic technique.

Ahn, S., & Choi, J. (2004). Teachers' Subject Matter Knowledge as a Teacher Qualification: A Synthesis of the Quantitative Literature on Students' Mathematics Achievement. Online Submission.

School interventions that work: Targeted support for low-performing students

This report breaks out key steps in the school identification and improvement process, focusing on (1) a diagnosis of school needs; (2) a plan to improve schools; and (3) evidence-based interventions that work.

Effects of Acceptability on Teachers' Implementation of Curriculum-Based Measurement and Student Achievement in Mathematics Computation

The authors investigated the hypothesis that treatment acceptability influences teachers' use of a formative evaluation system (curriculum-based measurement) and, relatedly, the amount of gain effected in math for their students.

Allinder, R. M., & Oats, R. G. (1997). Effects of acceptability on teachers' implementation of curriculum-based measurement and student achievement in mathematics computation. Remedial and Special Education, 18(2), 113-120.

Predictors of Elementary-aged Students’ Writing Fluency Growth in Response to a Performance Feedback Writing Intervention

The goal of the proposed study was to determine whether third-grade students’ (n = 74) transcriptional skills and gender predicted their writing fluency growth in response to a performance feedback intervention.

Alvis, A. V. (2019). Predictors of Elementary-aged Students’ Writing Fluency Growth in Response to a Performance Feedback Writing Intervention

A systematic review and summarization of the recommendations and research surrounding Curriculum-Based Measurement of oral reading fluency (CBM-R) decision rules.

This article reviews the decision rules applied to curriculum-based measurement of oral reading fluency scores. It concludes that the rules were most often based on expert opinion.

Ardoin, S. P., Christ, T. J., Morena, L. S., Cormier, D. C., & Klingbeil, D. A. (2013). A systematic review and summarization of the recommendations and research surrounding Curriculum-Based Measurement of oral reading fluency (CBM-R) decision rules. Journal of School Psychology, 51(1), 1-18.

High impact teaching for sport and exercise psychology educators.

Just as an athlete needs effective practice to be able to compete at high levels of performance, students benefit from formative practice and feedback to master skills and content in a course. At the most complex and challenging end of the spectrum of summative assessment techniques, the portfolio involves a collection of artifacts of student learning organized around a particular learning outcome.

Beers, M. J. (2020). Playing like you practice: Formative and summative techniques to assess student learning. High impact teaching for sport and exercise psychology educators, 92-102.

Assessment and classroom learning

This is a review of the literature on classroom formative assessment. Several studies show firm evidence that innovations designed to strengthen the frequent feedback that students receive about their learning yield substantial learning gains.

Black, P., & Wiliam, D. (1998). Assessment and classroom learning. Assessment in Education: principles, policy & practice, 5(1), 7-74.

Human characteristics and school learning

This paper theorizes that variations in learning and the level of learning of students are determined by the students' learning histories and the quality of instruction they receive.

Bloom, B. (1976). Human characteristics and school learning. New York: McGraw-Hill.

Developing effective assessment in higher education: A practical guide

Research and experience tell us very forcefully about the importance of assessment in higher education. It shapes the experience of students and influences their behaviour more than the teaching they receive. The influence of assessment means that ‘there is more leverage to improve teaching through changing assessment than there is in changing anything else’.

Bloxham, S., & Boyd, P. (2007). Developing Effective Assessment in Higher Education: a practical guide.

Who Leaves? Teacher Attrition and Student Achievement

The goal of this paper is to estimate the extent to which there is differential attrition based on teachers' value-added to student achievement.

Boyd, D., Grossman, P., Lankford, H., Loeb, S., & Wyckoff, J. (2008). Who leaves? Teacher attrition and student achievement. Working Paper No. 14022. Cambridge, MA: National Bureau of Economic Research. Retrieved from https://www.nber.org/papers/w14022

Explaining the short careers of high-achieving teachers in schools with low-performing students

This paper examines New York City elementary school teachers’ decisions to stay in the same school, transfer to another school in the district, transfer to another district, or leave teaching in New York state during the first five years of their careers.

Boyd, D., Lankford, H., Loeb, S., & Wyckoff, J. (2005). Explaining the short careers of high-achieving teachers in schools with low-performing students. American Economic Review, 95(2), 166-171.

The narrowing gap in New York City teacher qualifications and its implications for student achievement in high-poverty schools.

By estimating the effect of teacher attributes using a value-added model, the analyses in this paper predict that observable qualifications of teachers resulted in average improved achievement for students in the poorest decile of schools of .03 standard deviations.

Boyd, D., Lankford, H., Loeb, S., Rockoff, J., & Wyckoff, J. (2008). The narrowing gap in New York City teacher qualifications and its implications for student achievement in high‐poverty schools. Journal of Policy Analysis and Management: The Journal of the Association for Public Policy Analysis and Management, 27(4), 793-818.

National board certification and teacher effectiveness: Evidence from a random assignment experiment

The National Board for Professional Teaching Standards (NBPTS) assesses teaching practice based on videos and essays submitted by teachers. They compared the performance of classrooms of elementary students in Los Angeles randomly assigned to NBPTS applicants and to comparison teachers.

Cantrell, S., Fullerton, J., Kane, T. J., & Staiger, D. O. (2008). National board certification and teacher effectiveness: Evidence from a random assignment experiment (No. w14608). National Bureau of Economic Research.

Buried Treasure: Developing a Management Guide From Mountains of School Data

This report provides a practical “management guide,” for an evidence-based key indicator data decision system for school districts and schools.

Celio, M. B., & Harvey, J. (2005). Buried Treasure: Developing A Management Guide From Mountains of School Data. Center on Reinventing Public Education.

A systematic review of peer-mediated interventions for children with autism spectrum disorder

Peer mediated intervention (PMI) is a promising practice used to increase social skills in children with autism spectrum disorder (ASD). PMIs engage typically developing peers as social models to improve social initiations, responses, and interactions.

Chang, Y. C., & Locke, J. (2016). A systematic review of peer-mediated interventions for children with autism spectrum disorder. Research in autism spectrum disorders, 27, 1-10.

Scientific practitioner: Assessing student performance: An important change is needed

A rationale and model for changing assessment efforts in schools from simple description to the integration of information from multiple sources for the purpose of designing interventions are described.

Christenson, S. L., & Ysseldyke, J. E. (1989). Scientific practitioner: Assessing student performance: An important change is needed. Journal of School Psychology, 27(4), 409-425.

Overview of Teacher Evaluation.

The purpose of this overview is to provide an understanding of the research base on professional development and its impact on student achievement, as well as offer recommendations for future teacher professional development.

Cleaver, S., Detrich, R., States, J. & Keyworth, R. (2020). Overview of Teacher Evaluation. Oakland, CA: The Wing Institute. https://www.winginstitute.org/quality-teachers-in-service

Assessing students’ participation in the classroom

We know that students should participate constructively in the classroom. In fact, most of us probably agree that a significant portion of a student’s grade should come from his or her participation. However, like many teachers, you may find it difficult to explain to students how you assess their participation.

Craven, J. A., & Hogan, T. (2001). Assessing participation in the classroom. Science Scope, 25(1), 36.

Varying Intervention Delivery in Response to Intervention: Confronting and Resolving Challenges With Measurement, Instruction, and Intensity

Daly, E. J., III, Martens, B. K., Barnett, D., Witt, J. C., & Olson, S. C. (2007). Varying Intervention Delivery in Response to Intervention: Confronting and Resolving Challenges With Measurement, Instruction, and Intensity. School Psychology Review, 36(4), 562-581.

Developments in Curriculum-Based Measurement

Curriculum-based measurement is a type of formative assessment. It is used to screen for students who are not progressing and to identify how well students are responding to interventions.

Deno, S. L. (2003). Developments in Curriculum-Based Measurement. Journal of Special Education, 37(3), 184-192.

Developing Curriculum-Based Measurement Systems For Data Based Special Education Problem Solving

This article reviews the advantages of curriculum-based measurement as part of a data-based problem solving model.

Deno, S. L., & Fuchs, L. S. (1987). Developing Curriculum-Based Measurement Systems For Data Based Special Education Problem Solving. Focus on Exceptional Children, 19(8), 1-16.

Treatment Integrity: Fundamental to Education Reform

To produce better outcomes for students, two things are necessary: (1) effective, scientifically supported interventions and (2) implementation of those interventions with high integrity. Typically, much greater attention has been given to identifying effective practices. This review focuses on features of high-quality implementation.

Detrich, R. (2014). Treatment integrity: Fundamental to education reform. Journal of Cognitive Education and Psychology, 13(2), 258-271.

Mini-Series: Academic Enablers to Improve Student Performance: Considerations for Research and Practice

A mini-series from School Psychology Review on academic enablers to improve student performance, with considerations for research and practice.

DiPerna, J., & Elliott, S. N. (2002). Promoting academic enablers to improve student performance: Considerations for research and practice [Special issue]. School Psychology Review, 31(3).

Primary and secondary prevention of behavior difficulties: Developing a data-informed problem-solving model to guide decision making at a school-wide level

This article describes using formative assessment as a foundational tool in a data-based problem-solving approach to social behavior problems.

Ervin, R. A., Schaughency, E., Matthews, A., Goodman, S. D., & McGlinchey, M. T. (2007). Primary and secondary prevention of behavior difficulties: Developing a data-informed problem-solving model to guide decision making at a school-wide level. Psychology in the Schools, 44(1), 7-18.

Single‐track year‐round education for improving academic achievement in U.S. K‐12 schools: Results of a meta‐analysis

This systematic review synthesizes the findings from 30 studies that compared the performance of students at schools using single‐track year‐round calendars to the performance of students at schools using a traditional calendar.

Fitzpatrick, D., & Burns, J. (2019). Single‐track year‐round education for improving academic achievement in US K‐12 schools: Results of a meta‐analysis. Campbell Systematic Reviews, 15(3), e1053.

Paradigmatic distinctions between instructionally relevant measurement models

This article compares and contrasts mastery level measures (grades) with curriculum-based measurement (global outcome measure).

Fuchs, L. S., & Deno, S. L. (1991). Paradigmatic distinctions between instructionally relevant measurement models. Exceptional Children, 57(6), 488-500.

Effects of Systematic Formative Evaluation: A Meta-Analysis

In this meta-analysis of studies that used formative assessment, the authors report an effect size of .70.

Fuchs, L. S., & Fuchs, D. (1986). Effects of Systematic Formative Evaluation: A Meta-Analysis. Exceptional Children, 53(3), 199-208.

Effects of systematic formative evaluation: A meta-analysis

This meta-analysis investigated the effects of formative evaluation procedures on student achievement. The data source was 21 controlled studies, which generated 96 relevant effect sizes, with an average weighted effect size of .70. The magnitude of the effect of formative evaluation was associated with publication type, data-evaluation method, data display, and use of behavior modification. Implications for special education practice are discussed.

Fuchs, L. S., & Fuchs, D. (1986). Effects of systematic formative evaluation: A meta-analysis. Exceptional children, 53(3), 199-208.

Use of curriculum-based measurement in identifying students with disabilities

Curriculum-based measurement is recommended as an assessment method to identify students that require special education services.

Fuchs, L. S., & Fuchs, D. (1997). Use of curriculum-based measurement in identifying students with disabilities. Focus on Exceptional Children, 1.

Effects of Curriculum-Based Measurement on Teachers' Instructional Planning

This study examines the effect of formative assessment on teachers’ instructional planning.

Fuchs, L. S., Fuchs, D., & Stecker, P. M. (1989). Effects of Curriculum-Based Measurement on Teachers’ Instructional Planning. Journal of Learning Disabilities, 22(1).

Teaching methods and students’ academic performance

The objective of this study was to investigate the differential effectiveness of teaching methods on students’ academic performance. Using the inferential statistics course, students’ assessment test scores were derived from the internal class test prepared by the lecturer.

Ganyaupfu, E. M. (2013). Teaching methods and students’ academic performance. International Journal of Humanities and Social Science Invention, 2(9), 29-35.

Validity of High-School Grades in Predicting Student Success beyond the Freshman Year: High-School Record vs. Standardized Tests as Indicators of Four-Year College Outcomes

High-school grades are often viewed as an unreliable criterion for college admissions, owing to differences in grading standards across high schools, while standardized tests are seen as methodologically rigorous, providing a more uniform and valid yardstick for assessing student ability and achievement. The present study challenges that conventional view. The study finds that high-school grade point average (HSGPA) is consistently the best predictor not only of freshman grades in college, the outcome indicator most often employed in predictive-validity studies, but of four-year college outcomes as well.

Geiser, S., & Santelices, M. V. (2007). Validity of High-School Grades in Predicting Student Success beyond the Freshman Year: High-School Record vs. Standardized Tests as Indicators of Four-Year College Outcomes. Research & Occasional Paper Series: CSHE. 6.07. Center for Studies in Higher Education.

The Importance and Decision-Making Utility of a Continuum of Fluency-Based Indicators of Foundational Reading Skills for Third-Grade High-Stakes Outcomes

In this article, we examine assessment and accountability in the context of a prevention-oriented assessment and intervention system designed to assess early reading progress formatively.

Good, R. H., III, Simmons, D. C., & Kame'enui, E. J. (2001). The importance and decision-making utility of a continuum of fluency-based indicators of foundational reading skills for third-grade high-stakes outcomes. Scientific Studies of Reading, 5(3), 257-288.

The Importance and Decision-Making Utility of a Continuum of Fluency-Based Indicators of Foundational Reading Skills for Third-Grade High-Stakes Outcomes.

The authors contrast the functions of high-stakes testing with prevention-based assessment. The authors also show the value of using formative assessment to estimate performance on high-stakes tests.

Good, R. H., III, Simmons, D. C., & Kame’enui, E. J. (2001). The Importance and Decision-Making Utility of a Continuum of Fluency-Based Indicators of Foundational Reading Skills for Third-Grade High-Stakes Outcomes. Scientific Studies of Reading, 5(3), 257-288.

A Building-Based Case Study of Evidence-Based Literacy Practices: Implementation, Reading Behavior, and Growth in Reading Fluency, K--4.

Curriculum-based measures were used to evaluate student progress across multiple years following the introduction of selected evidence-based practices.

Greenwood, C. R., Tapia, Y., Abbott, M., & Walton, C. (2003). A Building-Based Case Study of Evidence-Based Literacy Practices: Implementation, Reading Behavior, and Growth in Reading Fluency, K--4. Journal of Special Education, 37(2), 95.

Can comprehension be taught? A quantitative synthesis of “metacognitive” studies

To assess the effect of “metacognitive” instruction on reading comprehension, 20 studies, with a total student population of 1,553, were compiled and quantitatively synthesized. In this compilation of studies, metacognitive instruction was found particularly effective for junior high students (seventh and eighth grades). Among the metacognitive skills, awareness of textual inconsistency and the use of self-questioning as both a monitoring and a regulating strategy were most effective. Reinforcement was the most effective teaching strategy.

Haller, E. P., Child, D. A., & Walberg, H. J. (1988). Can comprehension be taught? A quantitative synthesis of “metacognitive” studies. Educational Researcher, 17(9), 5-8.

Teacher training, teacher quality and student achievement

The authors study the effects of various types of education and training on the ability of teachers to promote student achievement.

Harris, D. N., & Sass, T. R. (2011). Teacher training, teacher quality and student achievement. Journal of Public Economics, 95(7–8), 798-812.

Visible learning: A synthesis of over 800 meta-analyses relating to achievement

Hattie’s book is designed as a meta-meta-study that collects, compares, and analyzes the findings of many previous studies in education. Hattie focuses on schools in the English-speaking world, but most aspects of the underlying story should be transferable to other countries and school systems as well. Visible Learning is nothing less than a synthesis of more than 50,000 studies covering more than 80 million pupils. Hattie uses the statistical measure of effect size to compare the impact of many influences on students’ achievement, e.g., class size, holidays, feedback, and learning strategies.

Hattie, J. (2008). Visible learning: A synthesis of over 800 meta-analyses relating to achievement. New York, NY: Routledge.

Variability in reading ability gains as a function of computer-assisted instruction method of presentation

This study examines the effects on early reading skills of three different methods of presenting material with computer-assisted instruction.

Johnson, E. P., Perry, J., & Shamir, H. (2010). Variability in reading ability gains as a function of computer-assisted instruction method of presentation. Computers and Education, 55(1), 209–217.

Retention and nonretention of at-risk readers in first grade and their subsequent reading achievement

Some of the specific reasons for the success or failure of retention in the area of reading were examined via an in-depth study of a small number of both at-risk retained students and comparably low-skilled promoted children.

Juel, C., & Leavell, J. A. (1988). Retention and nonretention of at-risk readers in first grade and their subsequent reading achievement. Journal of Learning Disabilities, 21(9), 571-580.

Identifying Specific Learning Disability: Is Responsiveness to Intervention the Answer?

Responsiveness to intervention (RTI) is being proposed as an alternative model for making decisions about the presence or absence of specific learning disability. The author argues that many questions about RTI remain unanswered and that radical changes in proposed regulations are not warranted at this time.

Kavale, K. A. (2005). Identifying specific learning disability: Is responsiveness to intervention the answer? Journal of Learning Disabilities, 38(6), 553-562.

Sustaining Research-Based Practices in Reading: A 3-Year Follow Up

This study examined the extent to which the reading instructional practices learned by a cohort of teachers who participated in an intensive, yearlong professional development experience during the 1994-1995 school year have been sustained and modified over time.

Klingner, J. K., Vaughn, S., Tejero Hughes, M., & Arguelles, M. E. (1999). Sustaining research-based practices in reading: A 3-year follow-up. Remedial and Special Education, 20(5), 263-287.

Teachers’ Essential Guide to Formative Assessment

Like we consider our formative years when we draw conclusions about ourselves, a formative assessment is where we begin to draw conclusions about our students' learning. Formative assessment moves can take many forms and generally target skills or content knowledge that is relatively narrow in scope (as opposed to summative assessments, which seek to assess broader sets of knowledge or skills).

Knowles, J. (2020). Teachers’ Essential Guide to Formative Assessment.

A self-regulated flipped classroom approach to improving students’ learning performance in a mathematics course.

In this paper, a self-regulated flipped classroom approach is proposed to help students schedule their out-of-class time to effectively read and comprehend the learning content before class, such that they are capable of interacting with their peers and teachers in class for in-depth discussions.

Lai, C. L., & Hwang, G. J. (2016). A self-regulated flipped classroom approach to improving students’ learning performance in a mathematics course. Computers and Education, 100, 126–140.

Pulling back the curtain: Revealing the cumulative importance of high-performing, highly qualified teachers on students’ educational outcome

This study examines the relationship between two dominant measures of teacher quality, teacher qualification and teacher effectiveness (measured by value-added modeling), in terms of their influence on students’ short-term academic growth and long-term educational success (measured by bachelor’s degree attainment).

Lee, S. W. (2018). Pulling back the curtain: Revealing the cumulative importance of high-performing, highly qualified teachers on students’ educational outcome. Educational Evaluation and Policy Analysis, 40(3), 359–381.

Reading on grade level in third grade: How is it related to high school performance and college enrollment.

This study uses longitudinal administrative data to examine the relationship between third-grade reading level and four educational outcomes: eighth-grade reading performance, ninth-grade course performance, high school graduation, and college attendance.

Lesnick, J., Goerge, R., Smithgall, C., & Gwynne, J. (2010). Reading on grade level in third grade: How is it related to high school performance and college enrollment. Chicago: Chapin Hall at the University of Chicago, 1, 12.

Cost-effectiveness and educational policy.

This article provides a summary of measuring the fiscal impact of practices in education and educational policy.

Levin, H. M., & McEwan, P. J. (2002). Cost-effectiveness and educational policy. Larchmont, NY: Eye on Education.

Do Pay-for-Grades Programs Encourage Student Academic Cheating? Evidence from a Randomized Experiment

Using a randomized control trial in 11 Chinese primary schools, we studied the effects of pay-for-grades programs on academic cheating. We randomly assigned 82 classrooms into treatment or control conditions, and used a statistical algorithm to determine the occurrence of cheating.

Li, T., & Zhou, Y. (2019). Do Pay-for-Grades Programs Encourage Student Academic Cheating? Evidence from a Randomized Experiment. Frontiers of Education in China, 14(1), 117-137.

A Theory-Based Meta-Analysis of Research on Instruction.

This research synthesis examines instructional research in a functional manner to provide guidance for classroom practitioners.

Marzano, R. J. (1998). A Theory-Based Meta-Analysis of Research on Instruction.

A New Era of School Reform: Going Where the Research Takes Us.

This monograph attempts to synthesize and interpret the extant research from the last 4 decades on the impact of schooling on students' academic achievement.

Marzano, R. J. (2001). A New Era of School Reform: Going Where the Research Takes Us.

Classroom Instruction That Works: Research Based Strategies For Increasing Student Achievement

This is a study of classroom management on student engagement and achievement.

Marzano, R. J., Pickering, D., & Pollock, J. E. (2001). Classroom instruction that works: Research-based strategies for increasing student achievement. ASCD.

Improving education through standards-based reform.

This report offers recommendations for the implementation of standards-based reform and outlines possible consequences for policy changes. It summarizes both the vision and intentions of standards-based reform and the arguments of its critics.

McLaughlin, M. W., & Shepard, L. A. (1995). Improving Education through Standards-Based Reform. A Report by the National Academy of Education Panel on Standards-Based Education Reform. National Academy of Education, Stanford University, CERAS Building, Room 108, Stanford, CA 94305-3084.

Blended learning report

This research report presents the findings of this formative and summative research effort.

Murphy, R., Snow, E., Mislevy, J., Gallagher, L., Krumm, A., & Wei, X. (2014). Blended learning report. Austin, TX: Michael and Susan Dell Foundation.

Nation’s report card

How did U.S. students perform on the most recent assessments? Select a jurisdiction and a result to see how students performed on the latest NAEP assessments.

National Assessment of Educational Progress (NAEP). (2020). Nation’s report card. Washington, DC: National Center for Education Statistics, Institute of Education Sciences, U.S. Department of Education.

The Nation's Report Card: Math Grade 4 National Results.

To investigate the relationship between students’ achievement and various contextual factors, NAEP collects information from teachers about their background, education, and training.

National Assessment of Educational Progress (NAEP). (2011a). The nation's report card: Math grade 4 national results. Retrieved from http://nationsreportcard.gov/math_2011/gr4_national.asp?subtab_id=Tab_3&tab_id=tab2#chart

The Nation's Report Card: Reading Grade 12 National Results

How did U.S. students perform on the most recent assessment?

National Assessment of Educational Progress (NAEP). (2011b). The nation's report card: Reading grade 12 national results. Retrieved from http://nationsreportcard.gov/reading_2009/gr12_national.asp?subtab_id=Tab_3&tab_id=tab2#

An Introduction to NAEP

This non-technical brochure provides introductory information on the development, administration, scoring, and reporting of the National Assessment of Educational Progress (NAEP). The brochure also provides information about the online resources available on the NAEP website.

The nation’s report card: Grade 12 reading and mathematics 2009 national and pilot state results

Twelfth-graders’ performance in reading and mathematics improves since 2005. Nationally representative samples of twelfth-graders from 1,670 public and private schools across the nation participated in the 2009 National Assessment of Educational Progress (NAEP).

National Center for Education Statistics (NCES). (2010b). The nation’s report card: Grade 12 reading and mathematics 2009 national and pilot state results. (NCES 2011-455). Retrieved from http://nces.ed.gov/nationsreportcard/pdf/main2009/2011455.pdf

Data Explorer for Long-term Trend.

The Data Explorer for the Long-Term Trend assessments provides national mathematics and reading results dating from the 1970s.

National Center for Education Statistics (NCES). (2011a). Data explorer for long-term trend. [Data file]. Retrieved from http://nces.ed.gov/nationsreportcard/lttdata/

Students Meeting State Proficiency Standards and Performing at or above the NAEP Proficient Level: 2009.

Percentages of students meeting state proficiency standards and performing at or above the NAEP Proficient level, by subject, grade, and state: 2009

National Center for Education Statistics (NCES). (2011f). Students meeting state proficiency standards and performing at or above the NAEP proficient level: 2009. Retrieved from http://nces.ed.gov/nationsreportcard/studies/statemapping/2009_naep_state_table.asp

The nation's report card: Writing 2011

In this new national writing assessment sample, 24,100 eighth-graders and 28,100 twelfth-graders engaged with writing tasks and composed their responses on computer. The assessment tasks reflected writing situations common to both academic and workplace settings and asked students to write for several purposes and communicate to different audiences.

National Center for Education Statistics. (2012). The nation's report card: Writing 2011 (NCES 2012-470).

Effects of the National Institute for School Leadership’s Executive Development Program on school performance in Pennsylvania: 2006-2010 pilot cohort results.

This study examined the impact of EDP on student achievement in Pennsylvania schools from 2006-2010. It updates and extends a prior evaluation (Nunnery, Ross, & Yen, 2010a) study of this same cohort from 2006-2009.

Nunnery, A. J., Yen, C., & Ross, S. M. (2010). Effects of the National Institute for School Leadership’s Executive Development Program on school performance in Pennsylvania: 2006-2010 pilot cohort results. Norfolk, VA: Old Dominion University, Center for Educational Partnerships. Retrieved from https://files.eric.ed.gov/fulltext/ED531043.pdf

PISA 2018 Results (Volume I): What Students Know and Can Do.

PISA 2009 Results: Learning Trends. Changes in Student Performance Since 2000 (Volume V)

This volume of PISA 2009 results looks at the progress countries have made in raising student performance and improving equity in the distribution of learning opportunities.

Organisation for Economic Co-operation and Development (OECD). (2010a). PISA 2009 results: Learning trends–Changes in student performance since 2000 (Volume V). Retrieved from https://www.oecd-ilibrary.org/education/pisa-2009-results-learning-trends_9789264091580-en

PISA 2009 Results: Overcoming social background–Equity in learning opportunities and outcomes

Volume II of PISA's 2009 results looks at how successful education systems moderate the impact of social background and immigrant status on student and school performance.

Organisation for Economic Co-operation and Development (OECD). (2010b). PISA 2009 results: Overcoming social background–Equity in learning opportunities and outcomes (Volume II). Retrieved from http://dx.doi.org/10.1787/9789264091504-en

PISA 2006 Technical Report

The OECD’s Programme for International Student Assessment (PISA) surveys, which take place every three years, have been designed to collect information about 15-year-old students in participating countries.

Organization for Economic Co-operation and Development (OECD). (2006). PISA 2006 technical report. Retrieved from http://www.oecd.org/pisa/pisaproducts/42025182.pdf

PISA 2009 Results: What students know and can do–Student performance in reading, mathematics and science (Volume I)

This first volume of PISA 2009 survey results provides comparable data on 15-year-olds' performance on reading, mathematics, and science across 65 countries.

Organization for Economic Co-operation and Development (OECD). (2010c). PISA 2009 results: What students know and can do–Student performance in reading, mathematics and science (Volume I). Retrieved from http://dx.doi.org/10.1787/9789264091450-en

Getting back on track: The effect of online versus face-to-face credit recovery in Algebra I on high school credit accumulation and graduation

This research brief is one in a series for the Back on Track Study that presents the findings regarding the relative impact of online versus face-to-face Algebra I credit recovery on students’ academic outcomes, aspects of implementation of the credit recovery courses, and the effects over time of expanding credit recovery options for at-risk students.

Rickles, J., Heppen, J., Allensworth, E., Sorenson, N., Walters, K., & Clements, P. (2018). Getting back on track: The effect of online versus face-to-face credit recovery in Algebra I on high school credit accumulation and graduation. American Institutes for Research, Washington, DC; University of Chicago Consortium on School Research, Chicago, IL. https://www.air.org/system/files/downloads/report/Effect-Online-Versus-Face-to-Face-Credit-Recovery-in-Algebra-High-School-Credit-Accumulation-and-Graduation-June-2017.pdf

LESSONS; Testing Reaches A Fork in the Road

CHILDREN take one of two types of standardized test, one ''norm-referenced,'' the other ''criteria-referenced.'' Although those names have an arcane ring, most parents are familiar with how the exams differ.

Rothstein, R. (2002, May 22). Lessons: Testing reaches a fork in the road. New York Times. http://www.nytimes.com/2002/05/22/nyregion/lessons-testing-reaches-a-fork-in-the-road.html

Building Capacity to Implement and Sustain Effective Practices to Better Serve Children

This article provides an overview of contextual factors across the levels of an educational system that influence implementation.

Schaughency, E., & Ervin, R. (2006). Building Capacity to Implement and Sustain Effective Practices to Better Serve Children. School Psychology Review, 35(2), 155-166. Retrieved from http://eric.ed.gov/?id=EJ788242

The Foundations of Educational Effectiveness

This book looks at research and theoretical models used to define educational effectiveness with the intent on providing educators with evidence-based options for implementing school improvement initiatives that make a difference in student performance.

Scheerens, J., & Bosker, R. (1997). The Foundations of Educational Effectiveness. Oxford: Pergamon.

Improvement on WASL Carries Asterisk

Scores went up in all grades and subjects this year on the Washington Assessment of Student Learning (WASL). But how much depends on how you look at them.

Shaw, L. (2004, September 2). Improvement on WASL carries asterisk. Seattle Times.

A Consumer’s Guide to Evaluating a Core Reading Program Grades K-3: A Critical Elements Analysis

A critical review of reading programs requires objective and in-depth analysis. For these reasons, the authors offer the following recommendations and procedures for analyzing critical elements of programs.

Simmons, D. C., & Kame’enui, E. J. (2003). A consumer’s guide to evaluating a core reading program grades K-3: A critical elements analysis. Retrieved December 19, 2006.

The effects of direct instruction flashcard and math racetrack procedures on mastery of basic multiplication facts by three elementary school students

The purpose of this study was to determine if a typical third-grade boy and fifth-grade girl and a boy with learning disabilities could benefit from the combined use of Direct Instruction (DI) flashcard and math racetrack procedures in an after-school program. The dependent variable was accuracy and fluency of saying basic multiplication facts.

Skarr, A., Zielinski, K., Ruwe, K., Sharp, H., Williams, R. L., & McLaughlin, T. F. (2014). The effects of direct instruction flashcard and math racetrack procedures on mastery of basic multiplication facts by three elementary school students. Education and Treatment of Children, 37(1), 77-93.

Overview of Formative Assessment

Effective ongoing assessment, referred to in the education literature as formative assessment or progress monitoring, is indispensable in promoting teacher and student success. Feedback through formative assessment is ranked at or near the top of practices known to significantly raise student achievement. For decades, formative assessment has been found to be effective in clinical settings and, more important, in typical classroom settings. Formative assessment produces substantial results at a cost significantly below that of other popular school reform initiatives such as smaller class size, charter schools, accountability, and school vouchers. It also serves as a practical diagnostic tool available to all teachers. A core component of formal and informal assessment procedures, formative assessment allows teachers to quickly determine if individual students are progressing at acceptable rates and provides insight into where and how to modify and adapt lessons, with the goal of making sure that students do not fall behind.

States, J., Detrich, R. & Keyworth, R. (2017). Overview of Formative Assessment. Oakland, CA: The Wing Institute. http://www.winginstitute.org/student-formative-assessment.

Progress Monitoring as Essential Practice Within Response to Intervention

Response to Intervention depends on regular, routine monitoring of student progress. This paper describes a multi-component approach to monitoring progress.

Stecker, P. M., Fuchs, D., & Fuchs, L. S. (2008). Progress Monitoring as Essential Practice Within Response to Intervention. Rural Special Education Quarterly, 27(4), 10-17.

Using Curriculum-Based Measurement to Improve Student Achievement: Review of Research

This article reviews the efficacy of curriculum-based measurement as a methodology for enhancing student achievement in reading and math. Variables that contribute to the benefit of curriculum-based measurement are discussed.

Stecker, P. M., Fuchs, L. S., & Fuchs, D. (2005). Using Curriculum-Based Measurement to Improve Student Achievement: Review of Research. Psychology in the Schools, 42(8), 795-819.

Using Progress-Monitoring Data to Improve Instructional Decision Making

To determine the effectiveness of instruction, teachers require data about its effects. With these data, teachers can adjust instruction when progress is not being made.

Stecker, P. M., Lembke, E. S., & Foegen, A. (2008). Using Progress-Monitoring Data to Improve Instructional Decision Making. Preventing School Failure, 52(2), 48-58.

Keeping RTI on track: How to identify, repair and prevent mistakes that derail implementation

Keeping RTI on Track is a resource to help educators overcome the biggest problems associated with false starts or implementation failure. Each chapter in this book calls attention to a common error, describing how to avoid the pitfalls that lead to false starts, how to determine when you're in one, and how to get back on the right track.

Vanderheyden, A. M., & Tilly, W. D. (2010). Keeping RTI on track: How to identify, repair and prevent mistakes that derail implementation. LRP Publications.

On the Academic Performance of New Jersey's Public School Children: I. Fourth and Eighth Grade Mathematics in 1992

This report describes the first of a series of researches that will attempt to characterize the performance of New Jersey's public school system.

Wainer, H. (1994). On the Academic Performance of New Jersey's Public School Children: I. Fourth and Eighth Grade Mathematics in 1992. ETS Research Report Series, 1994(1), i-17.

Productive teaching

This literature review examines the impact of various instructional methods.

Walberg, H. J. (1999). Productive teaching. In H. C. Waxman & H. J. Walberg (Eds.), New directions for teaching, practice, and research (pp. 75-104). Berkeley, CA: McCutchan Publishing.

Teacher use of interventions in general education settings: Measurement and analysis of the independent variable

This study evaluated the effects of performance feedback on increasing the quality of implementation of interventions by teachers in a public school setting.

Witt, J. C., Noell, G. H., LaFleur, L. H., & Mortenson, B. P. (1997). Teacher use of interventions in general education settings: Measurement and analysis of the independent variable. Journal of Applied Behavior Analysis, 30(4), 693.

Can Rapid Assessment Moderate the Consequences of High-Stakes Testing

The author makes the case that rapid assessment can identify struggling students who can then be provided intensive instruction so their performance on high stakes tests is improved.

Yeh, S. S. (2006). Can Rapid Assessment Moderate the Consequences of High-Stakes Testing. Education & Urban Society, 39(1), 91-112.

High-Stakes Testing: Can Rapid Assessment Reduce the Pressure

The author reports data suggesting that the systematic use of formative assessment can reduce the pressure teachers experience with high-stakes testing.

Yeh, S. S. (2006). High-stakes testing: Can rapid assessment reduce the pressure? Teachers College Record, 108(4).

The Cost-Effectiveness of Comprehensive School Reform and Rapid Assessment

The author compares the effectiveness of comprehensive school reform relative to rapid progress monitoring. Progress monitoring results in much greater benefit than comprehensive school reform.

Yeh, S. S. (2008). The Cost-Effectiveness of Comprehensive School Reform and Rapid Assessment. Education Policy Analysis Archives, 16(13), 1-32.

The Cost-Effectiveness of Replacing the Bottom Quartile of Novice Teachers Through Value-Added Teacher Assessment

The authors examine the effectiveness of replacing low performing teachers relative to using formative assessment as a means of increasing student outcomes.

Yeh, S. S., & Ritter, J. (2009). The Cost-Effectiveness of Replacing the Bottom Quartile of Novice Teachers Through Value-Added Teacher Assessment. Journal of Education Finance, 34(4), 426-451.

Defining and measuring academic success.

This paper conducts an analytic literature review to examine the use and operationalization of the term “academic success” in multiple academic fields.

York, T. T., Gibson, C., & Rankin, S. (2015). Defining and measuring academic success. Practical Assessment, Research & Evaluation, 20(5), 1–20. Retrieved from https://scholarworks.umass.edu/pare/vol20/iss1/5/

Self-regulated learning and academic achievement: An overview.

This overview presents a general definition of self-regulated academic learning and identifies the distinctive features of this capability for acquiring knowledge and skill.

Zimmerman, B. J. (1990). Self-regulated learning and academic achievement: An overview. Educational Psychologist, 25(1), 3–17.