How big will the impact of an intervention be?

Why is this question important? The average educator faces many challenges in interpreting and using evidence to make critical decisions. Perhaps the greatest obstacle is understanding statistics. For decades, statistical significance has told educators whether an observed difference could plausibly have arisen by chance alone. But practitioners need to know more: they need to know how large the impact is likely to be if a new practice is adopted. This paper explains how effect size can play an important part in meeting this need.

See further discussion below.

Source: It's the Effect Size, Stupid - What effect size is and why it is important, 2002

Results:

The paper describes how to calculate effect size and how to interpret the results. It supports its claims with examples drawn from common practices, giving for each the intervention, the outcome measure, the effect size, and the authors of the study. Coe argues that effect size can help researchers and practitioners move beyond asking whether an intervention works. It lets them understand how large its impact is likely to be if the practice is implemented in the field. Effect sizes therefore give decision makers a way to judge whether an intervention is worth the scarce resources it would consume.
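The basic calculation is the difference between the mean of the treatment (experimental) group and the mean of the control group, divided by a standard deviation. The sketch below assumes the pooled standard deviation of both groups. This is one common choice; the control group's standard deviation alone is another. The function names and the test scores are hypothetical, chosen only for illustration.

```python
import math
from statistics import mean, variance
from typing import Sequence


def pooled_sd(group_a: Sequence[float], group_b: Sequence[float]) -> float:
    """Pooled sample standard deviation of two groups (n - 1 weighting)."""
    n_a, n_b = len(group_a), len(group_b)
    pooled_var = ((n_a - 1) * variance(group_a) + (n_b - 1) * variance(group_b)) / (
        n_a + n_b - 2
    )
    return math.sqrt(pooled_var)


def effect_size(treatment: Sequence[float], control: Sequence[float]) -> float:
    """(mean of treatment group - mean of control group) / pooled standard deviation."""
    return (mean(treatment) - mean(control)) / pooled_sd(treatment, control)


# Hypothetical test scores for two small groups (assumption, not data from the paper).
treatment_scores = [52, 56, 60]
control_scores = [50, 54, 58]
print(effect_size(treatment_scores, control_scores))  # 0.5
```

Here the treatment mean (56) is 2 points above the control mean (54), and the pooled standard deviation is 4. The effect size is therefore 0.5, meaning the groups differ by half a standard deviation.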

Implications:

Coe advocates reporting effect sizes for interventions in the social sciences. Effect size is increasingly used in research, especially in meta-analysis. A growing number of academics and policy makers, including the What Works Clearinghouse, recommend reporting effect sizes. Educators therefore need to become familiar with this tool to understand published research.
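Because effect sizes are standardized, a meta-analysis can combine results from several studies into a single estimate. The sketch below uses a fixed-effect, inverse-variance weighted mean. This is one standard approach and not necessarily the exact procedure in the paper. It relies on the usual large-sample approximation to the sampling variance of an effect size, so larger studies receive more weight, and it reports a 95% confidence interval as the margin for error. The `Study` type, the function names, and the two studies are hypothetical.

```python
import math
from typing import NamedTuple, Sequence


class Study(NamedTuple):
    d: float          # effect size reported by the study
    n_treatment: int  # size of the treatment group
    n_control: int    # size of the control group


def variance_of_d(study: Study) -> float:
    """Large-sample approximation to the sampling variance of an effect size."""
    n1, n2, d = study.n_treatment, study.n_control, study.d
    return (n1 + n2) / (n1 * n2) + d ** 2 / (2 * (n1 + n2))


def combine(studies: Sequence[Study]) -> tuple[float, float, float]:
    """Fixed-effect, inverse-variance weighted mean effect size with a 95% CI."""
    weights = [1 / variance_of_d(s) for s in studies]
    pooled = sum(w * s.d for w, s in zip(weights, studies)) / sum(weights)
    se = math.sqrt(1 / sum(weights))  # standard error of the pooled estimate
    return pooled, pooled - 1.96 * se, pooled + 1.96 * se


# Two hypothetical studies: the larger one pulls the estimate toward its own d.
example_studies = [Study(d=0.4, n_treatment=50, n_control=50),
                   Study(d=0.2, n_treatment=100, n_control=100)]
estimate, low, high = combine(example_studies)
print(f"pooled d = {estimate:.2f}, 95% CI [{low:.2f}, {high:.2f}]")
```

A fixed-effect model assumes every study estimates the same underlying effect. When interventions or populations differ substantially, a random-effects model is often preferred.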

Authors: Robert Coe

Publisher: Centre for Evaluation and Monitoring, Durham University, England

Study Description: The paper shows how effect size can be used both to understand the impact of educational interventions and to quantify the difference between two groups. Effect size goes beyond statistical significance by measuring the magnitude of an intervention's effect: how big the effect of the innovation is. The paper shows how to calculate and interpret effect size, using a number of examples. It also warns that an effect size describes a difference between groups; on its own, it does not establish cause and effect. The paper also examines alternative measures that address the same questions as effect size.
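One way to interpret an effect size is to ask where the average person in the treatment group would fall within the control group's distribution. The sketch below assumes both groups are normally distributed with the same standard deviation. Under that assumption, the answer is the standard normal cumulative distribution function evaluated at the effect size. For instance, an effect size of 0.6 places the average treated person above roughly 73% of the control group. The function name is hypothetical.

```python
from statistics import NormalDist


def control_group_percentile(d: float) -> float:
    """Percentage of the control group scoring below the average treated person.

    Assumes both groups are normally distributed with the same standard deviation,
    so the treatment mean sits d standard deviations above the control mean.
    """
    return 100 * NormalDist().cdf(d)


for d in (0.0, 0.2, 0.5, 0.8):
    print(f"effect size {d:.1f}: average treated person scores above "
          f"{control_group_percentile(d):.0f}% of the control group")
```

When the normality assumption is doubtful, for example with skewed outcomes, these percentages are only rough guides.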

The paper is divided into the following sections:

- Why do we need effect size?

- How is it calculated?

- How can effect sizes be interpreted?

- What is the relationship between ‘effect size’ and ‘significance’?

- What is the margin for error in estimating effect sizes?

- How can knowledge about effect sizes be combined?

- What other factors influence effect size?

- Are there alternative measures of effect size?

- Conclusions

Definitions:

- Effect Size: A standardized measure of the effect of an intervention (treatment) on an outcome. The effect size represents the change, measured in standard deviations, in the average outcome that can be expected when a person is given the treatment. Because effect sizes are standardized, they can be compared across studies.

- Statistical Significance: Statistical significance refers to how unlikely a result would be if chance alone were at work. In everyday speech, "significant" suggests that something is important or meaningful. By contrast, "statistical significance" signifies only that a result is unlikely to have occurred by chance. It does not imply that the result is large, important, or of any practical value in making decisions.
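Significance depends on sample size, whereas effect size does not. A small effect can therefore be statistically significant in a large study and non-significant in a small one. The sketch below assumes two equal-sized groups. It converts an effect size to a t statistic using the identity t = d / sqrt(1/n1 + 1/n2), then approximates the two-sided p-value with the normal distribution instead of the t distribution. This is reasonable for large samples but slightly understates p for small ones. The function name and sample sizes are hypothetical.

```python
import math
from statistics import NormalDist


def approx_two_sided_p(d: float, n_per_group: int) -> float:
    """Approximate two-sided p-value for effect size d with two equal groups.

    Converts d to a t statistic, t = d / sqrt(1/n1 + 1/n2), then uses the normal
    distribution in place of the t distribution (a large-sample approximation).
    """
    t = d / math.sqrt(2 / n_per_group)
    return 2 * (1 - NormalDist().cdf(abs(t)))


# The same small effect size (d = 0.1) at two hypothetical sample sizes.
for n in (20, 2000):
    print(f"d = 0.1, n = {n} per group: approximate p = {approx_two_sided_p(0.1, n):.3f}")
```

With 20 per group the result is far from significant; with 2,000 per group the same effect size falls well below the conventional 0.05 threshold.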

Citation: Paper presented at the Annual Conference of the British Educational Research Association, University of Exeter, England, 12-14 September 2002

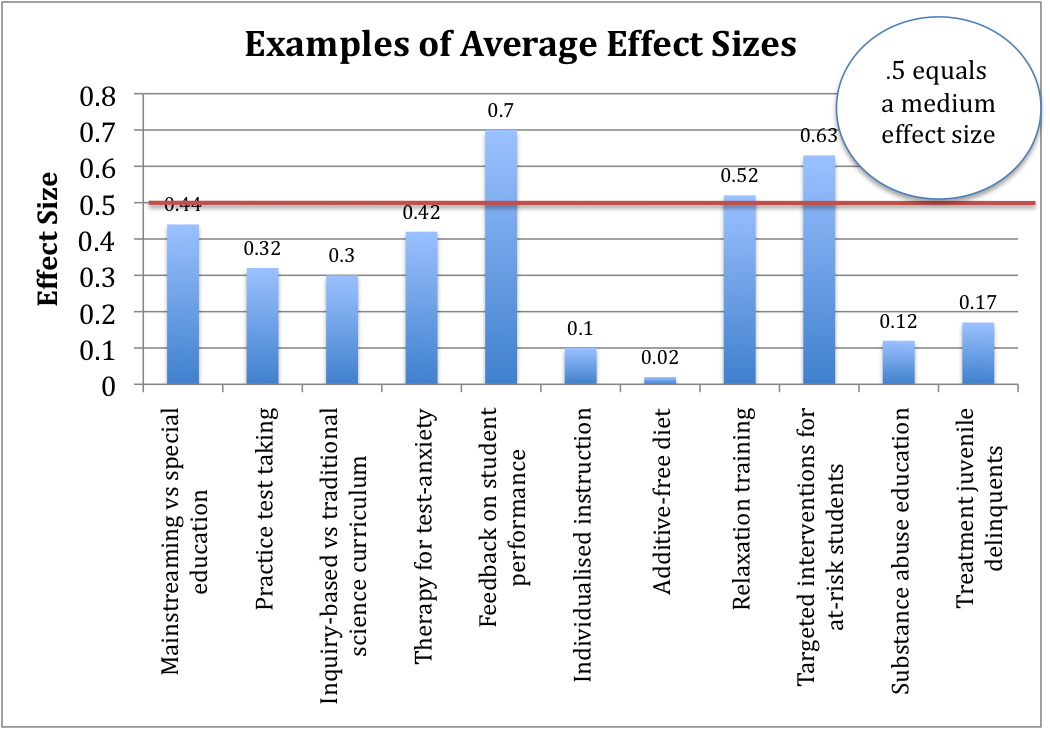

| Intervention | Outcome | Effect Size | Source |
|---|---|---|---|
| Mainstreaming vs special education (for primary age, disabled students) | Achievement | 0.44 | Wang and Baker (1986) |
| Practice test taking | Test scores | 0.32 | Kulik, Bangert and Kulik (1984) |
| Inquiry-based vs traditional science curriculum | Achievement | 0.3 | Shymansky, Hedges and Woodworth (1990) |
| Therapy for test-anxiety (for anxious students) | Test performance | 0.42 | Hembree (1988) |
| Feedback to teachers about student performance (students with IEPs) | Student achievement | 0.7 | Fuchs and Fuchs (1986) |
| Individualized instruction | Achievement | 0.1 | Bangert, Kulik and Kulik (1983) |
| Additive-free diet | Children's hyperactivity | 0.02 | Kavale and Forness (1983) |
| Relaxation training | Medical symptoms | 0.52 | Hyman et al. (1989) |
| Targeted interventions for at-risk students | Achievement | 0.63 | Slavin and Madden (1989) |
| School-based substance abuse education | Substance use | 0.12 | Bangert-Drowns (1988) |
| Treatment programs for juvenile delinquents | Delinquency | 0.17 | Lipsey (1992) |